The Fiddler AI Approach to Measuring Data Drift

In the rapidly evolving world of AI, understanding and managing data drift is crucial for maintaining the robustness and accuracy of AI models. The AI Science team at Fiddler AI has developed a novel, patented method to measure drift using text embeddings from large language models (LLMs). This approach, detailed in our research paper, stands out for its ability to capture subtle shifts in data distributions effectively.

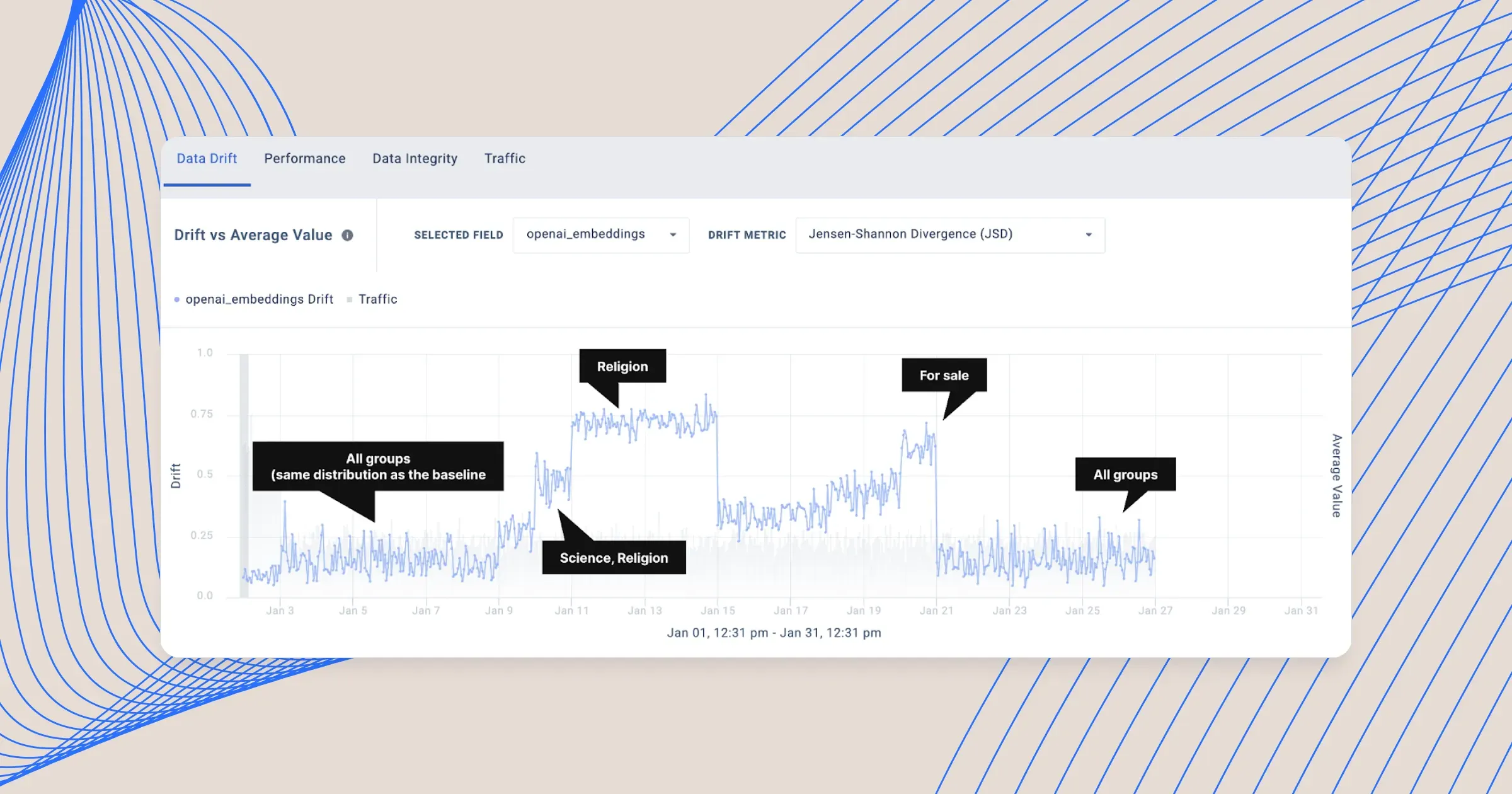

In short, we use a data-driven approach to detect high-density regions in the embedding space of the baseline data, and we track how the relative density of those regions changes over time. The details of our clustering-based approach were previously published in a three-part blog series that demonstrates Fiddler’s method of measuring distributional shifts in language, vision, and text embeddings for LLMOps [5, 6, 7].
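The idea in the paragraph above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not Fiddler's implementation: it assumes k-means is used to find the high-density regions and Jensen-Shannon divergence is used to compare the resulting histograms, neither of which is named in this post. All function names and the default `k=8` are hypothetical.

```python
import numpy as np


def assign(points, centroids):
    """Return the index of the nearest centroid for every point."""
    dists = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)


def fit_centroids(baseline, k, n_iter=50, seed=0):
    """Plain Lloyd's k-means on the baseline embeddings (assumed clustering step)."""
    rng = np.random.default_rng(seed)
    start = rng.choice(len(baseline), size=k, replace=False)
    centroids = baseline[start].astype(float)
    for _ in range(n_iter):
        labels = assign(baseline, centroids)
        for j in range(k):
            members = baseline[labels == j]
            if len(members):  # keep the previous centroid if a cluster empties
                centroids[j] = members.mean(axis=0)
    return centroids


def bin_frequencies(points, centroids, eps=1e-9):
    """Fraction of points in each cluster, smoothed so no bin is exactly zero."""
    counts = np.bincount(assign(points, centroids), minlength=len(centroids))
    freqs = counts + eps
    return freqs / freqs.sum()


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits: 0 for identical histograms, at most 1."""
    m = 0.5 * (p + q)

    def kl(a, b):
        return float(np.sum(a * np.log2(a / b)))

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def drift_score(baseline, production, k=8, seed=0):
    """Cluster the baseline once, then compare how both samples fill the clusters."""
    centroids = fit_centroids(baseline, k, seed=seed)
    return js_divergence(
        bin_frequencies(baseline, centroids),
        bin_frequencies(production, centroids),
    )
```

Here `baseline` and `production` are assumed to be 2-D arrays with one embedding vector per row. The centroids are fitted on the baseline only, so a production window that concentrates in a few baseline clusters, or falls far from all of them, shows up as a change in the bin frequencies.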

In this blog post, we highlight the advantages of using large language models (LLMs) for monitoring data drift, and we take a deep dive into empirical evaluations on real-world datasets that provide concrete evidence for the ideas presented in our most recent paper [1].

The Challenge of Data Drift in Natural Language Processing

Before diving into the research findings, let's talk about why monitoring data drift is important. In the AI ecosystem, data drift refers to a change in the distribution of model inputs or outputs over time, which can degrade the performance of AI systems. Detecting and addressing these shifts is pivotal for any AI-driven organization.

Natural language processing (NLP) models are increasingly being used in ML pipelines, and the advent of new AI models, such as LLMs, has greatly extended the adoption of NLP solutions in different domains. Consequently, the problem of distributional shift (“data drift”) discussed above must be addressed for NLP data to avoid performance degradation after deployment [2, 3, 4].

Examples of data drift in NLP data include:

- The emergence of a new topic in customer chats

- The emergence of spam or phishing email messages that follow a different distribution compared to that used for training the detection models

- Differences in the characteristics of prompts issued to an LLM application during deployment compared to the characteristics of prompts used during testing and validation

Comparing Drift Sensitivity of Different Embeddings

As in many other NLP problems, access to high-quality text embeddings is crucial for measuring data drift. The better an embedding model captures semantic relationships, the higher the sensitivity of clustering-based drift monitoring. Recent developments in LLMs have shown that the embeddings generated internally by such models are very powerful and capture semantic relationships well. In fact, some LLMs are specifically trained to provide general-purpose text embeddings.

We performed an empirical study to compare the effectiveness of different embedding models in measuring distributional shifts in text. Our experiments simulated various data drift scenarios, providing a comprehensive view of how different embedding models respond to changes in data. We used three real-world text datasets (20 Newsgroups, Civil Comments, and Amazon Fine Food Reviews) and applied our clustering-based algorithm to measure both the drift already present in the real data and the synthetic drift that we introduced by modifying the distribution of text data points. We compared multiple embedding models, both traditional and LLM-based, and measured their sensitivity to distributional shifts at different levels of data drift.
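One simple way to introduce synthetic drift at a controlled level is to mix samples from a "drifted" pool into a baseline pool at a chosen fraction. The sketch below illustrates that idea only; the exact procedure used in the paper is not described in this post, and the function name, parameters, and the choice of mixing by fraction are assumptions made for illustration.

```python
import numpy as np


def make_drifted_sample(base_pool, drift_pool, drift_fraction, size, seed=0):
    """Draw `size` rows, with round(drift_fraction * size) of them from drift_pool.

    base_pool and drift_pool are 2-D arrays of embeddings (one row per text),
    for example embeddings of texts from a baseline topic and from a new topic.
    """
    if not 0.0 <= drift_fraction <= 1.0:
        raise ValueError("drift_fraction must be in [0, 1]")
    rng = np.random.default_rng(seed)
    n_drift = int(round(drift_fraction * size))
    n_base = size - n_drift
    # Sample without replacement so no text is counted twice in a window.
    base_idx = rng.choice(len(base_pool), size=n_base, replace=False)
    drift_idx = rng.choice(len(drift_pool), size=n_drift, replace=False)
    sample = np.concatenate([base_pool[base_idx], drift_pool[drift_idx]])
    rng.shuffle(sample)  # shuffles rows in place
    return sample
```

Sweeping `drift_fraction` from 0 to 1 and scoring each resulting sample against the baseline gives a curve of drift score versus injected drift, which is one way to compare how sensitive different embedding models are.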

More specifically, we observed that across the three different real-world datasets:

- The drift sensitivity of Word2Vec is generally poor.

- Term Frequency-Inverse Document Frequency (TF-IDF) and Bidirectional Encoder Representations from Transformers (BERT) perform well in certain scenarios and poorly in others.

- The other embedding models (Universal Sentence Encoder, Ada-001, and Ada-002) all perform well, though none of them was consistently the best.

- Sensitivity improves (approximately) monotonically with the number of bins, k, but reaches a point of diminishing returns when k is between 6 and 10 across all datasets and models tested.

- Increasing the size of the embedding vector also improves drift sensitivity monotonically, with the gains beginning to saturate around 256 components.

- For models with large embedding sizes (e.g., Ada-002), drift sensitivity saturates at a much lower number of dimensions when the dimensions are sampled randomly. This suggests that their sensitivity is not directly connected to their embedding size and that significant redundancy may be present.
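The random sampling of embedding dimensions mentioned in the last finding can be illustrated as follows. This is a hedged sketch: `pick_dimensions` is a hypothetical helper, and the post does not specify how the sampling was done beyond it being random.

```python
import numpy as np


def pick_dimensions(total_dims, n_dims, seed=0):
    """Choose n_dims distinct coordinate indices out of total_dims, in sorted order."""
    if not 1 <= n_dims <= total_dims:
        raise ValueError("n_dims must be between 1 and total_dims")
    rng = np.random.default_rng(seed)
    return np.sort(rng.choice(total_dims, size=n_dims, replace=False))


# Usage: apply the SAME columns to both samples before measuring drift,
# so baseline and production vectors stay comparable.
#   cols = pick_dimensions(baseline.shape[1], 256)
#   reduced_baseline = baseline[:, cols]
#   reduced_production = production[:, cols]
```

Repeating the drift measurement for increasing values of `n_dims` shows where sensitivity stops improving, which is the saturation point the finding refers to.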

Impact of Clustering and Embedding Dimensions

Our research also explored the impact of different parameters, like the number of clusters and embedding dimensions, on the sensitivity of drift detection.

Real-World Applications and Implications

Our findings have practical implications, especially for industries that rely heavily on text data, such as finance, healthcare, and e-commerce. By adopting our clustering-based drift monitoring method, organizations can better monitor subtle changes affecting their AI models and pinpoint where updates and retraining are needed.

Paving the Way for Robust AI Systems

In our research paper, we introduced sensitivity to drift as a new evaluation metric for comparing embedding models and demonstrated the efficacy of our approach on several real-world datasets. Our findings shed light on a novel application of LLMs as a promising approach to drift detection, especially for high-dimensional data. At Fiddler AI, we're committed to pushing the boundaries of AI safety and trust, and this research is a testament to that commitment.

Read the full research paper [1].

Acknowledgements: We thank Bashir Rastegarpanah for writing the majority of this blog, the rest of the Fiddler team for insightful discussions, and Karen He, Thuy Pham, and Kirti Dewan for reviewing the blog and providing feedback. The underlying technical work was done by Gyandev Gupta, Bashir Rastegarpanah, Amal Iyer, Josh Rubin, and Krishnaram Kenthapadi.

———

References

1. Gyandev Gupta, Bashir Rastegarpanah, Amalendu Iyer, Joshua Rubin, Krishnaram Kenthapadi, Measuring Distributional Shifts in Text: The Advantage of Language Model-Based Embeddings, arXiv, 2023

2. Xuezhi Wang, Haohan Wang, Diyi Yang, Measure and Improve Robustness in NLP Models: A Survey, NAACL 2022

3. Amal Iyer, Krishnaram Kenthapadi, Introducing Fiddler Auditor: Evaluate the Robustness of LLMs and NLP Models, Fiddler AI Blog, May 2023

4. Amit Paka, Krishna Gade, Krishnaram Kenthapadi, The Missing Link in Generative AI, Fiddler AI Blog, April 2023

5. Bashir Rastegarpanah, Monitoring natural language processing and computer vision models, Part 1, Fiddler AI Blog, October 2022

6. Amal Iyer, Monitoring Computer Vision Models using Fiddler, Fiddler AI Blog, December 2022

7. Bashir Rastegarpanah, Monitoring Natural Language Processing Models: Monitoring OpenAI text embeddings using Fiddler, Fiddler AI Blog, February 2023