Fiddler Trust Service for LLM Application Monitoring and Guardrails

How Fiddler Trust Service Strengthens AI Guardrails and LLM Monitoring

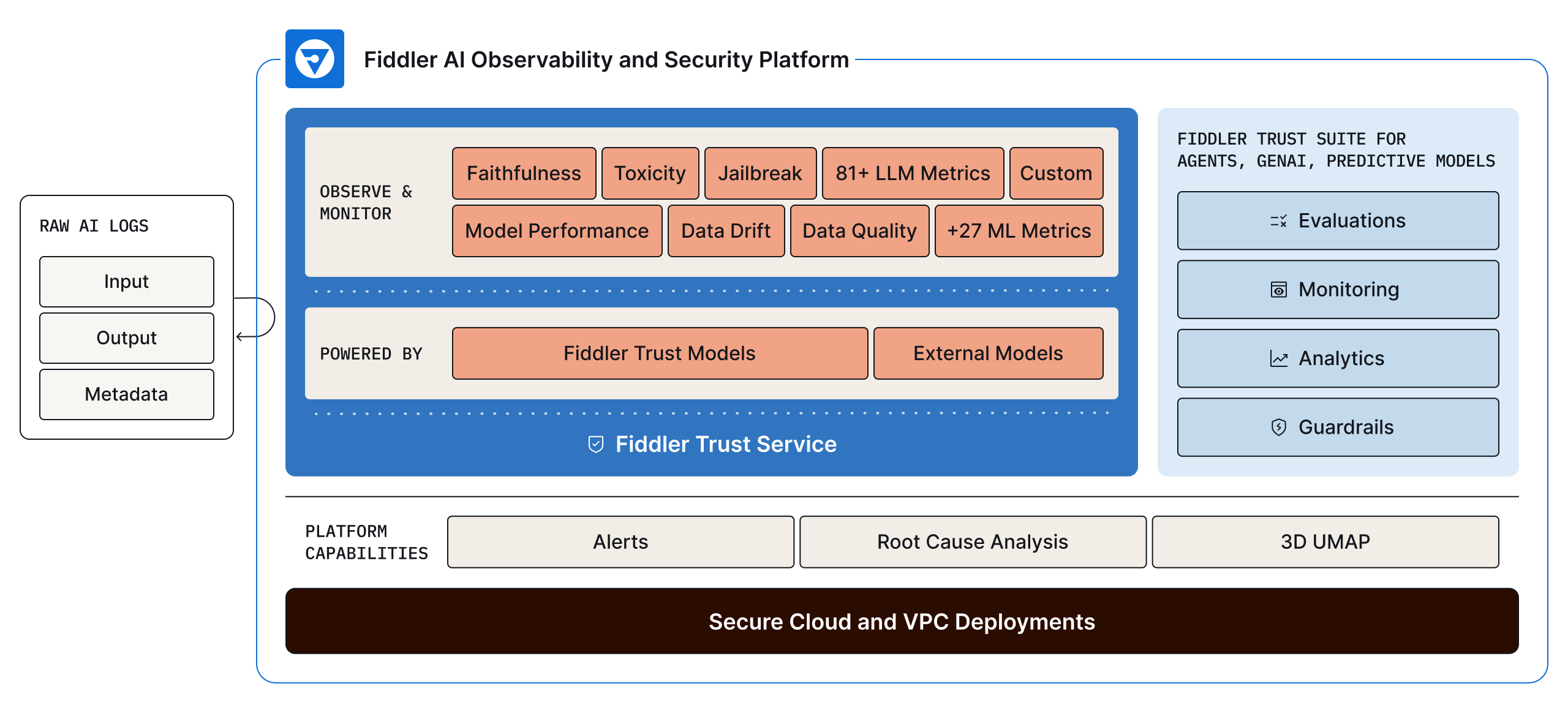

As part of the Fiddler AI Observability and Security platform, the Fiddler Trust Service is an enterprise-grade solution designed to strengthen AI guardrails and LLM monitoring, while mitigating LLM security risks. It provides high-quality, rapid monitoring of LLM prompts and responses, ensuring more reliable deployments in live environments.

Powering the Fiddler Trust Service are proprietary, fine-tuned Fiddler Trust Models, designed for task-specific, high-accuracy scoring of LLM prompts and responses with low latency. Trust Models are trained on thousands of datasets to provide accurate LLM monitoring and early threat detection, so you do not need to upload datasets manually. They are built to handle growing traffic and inference volume as LLM deployments scale, keep data protected in all environments, including air-gapped deployments, and offer a cost-effective alternative to closed-source models.

Fiddler Trust Models deliver guardrails that moderate LLM security risks, including hallucinations, toxicity, and prompt injection attacks. They also enable comprehensive LLM monitoring and online diagnostics for Generative AI (GenAI) applications, helping enterprises maintain safety, compliance, and trust in AI-driven interactions.

Fiddler Trust Models are Fast, Cost-Effective, and Accurate

Key LLM Metrics Scoring for Guardrails and Monitoring

With the Fiddler Trust Service, you can score an extensive set of metrics, so your LLM applications can support advanced use cases and meet stringent business demands. At the same time, it safeguards your LLM applications from harmful and costly risks.

- Faithfulness / Groundedness

- Answer relevance

- Context relevance

- Conciseness

- Coherence

- PII

- Toxicity

- Jailbreak

- Sentiment

- Profanity

- Regex match

- Topic

- Banned keywords

- Language detection
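Several of these metrics are model-based, while others, such as regex match and banned keywords, can be understood as simple rule checks. The following is a minimal sketch of those two rule-based checks in plain Python. It is an illustration only, not the Fiddler implementation; the function names, the sample response, the order-ID pattern, and the keyword list are all hypothetical.

```python
import re
from typing import Iterable, List


def regex_match(text: str, pattern: str) -> bool:
    """Return True if the pattern occurs anywhere in the text."""
    return re.search(pattern, text) is not None


def banned_keywords(text: str, banned: Iterable[str]) -> List[str]:
    """Return the banned keywords present in the text (case-insensitive, sorted)."""
    # Tokenize on runs of letters, digits, and apostrophes after lowercasing.
    words = set(re.findall(r"[a-z0-9']+", text.lower()))
    return sorted(words & {b.lower() for b in banned})


# Hypothetical LLM response and rules, for illustration only.
response = "Your order ID is ORD-12345. Our competitor AcmeCorp cannot match this."
has_order_id = regex_match(response, r"\bORD-\d{5}\b")
hits = banned_keywords(response, {"acmecorp", "guarantee"})

print(has_order_id)  # True
print(hits)          # ['acmecorp']
```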

Fiddler Trust Service: The Solution for LLM Applications in Production

Protect and Secure with Guardrails

- Safeguard your LLM applications with guardrails that instantly moderate risky prompts and responses.

- Customize guardrails to your organization’s risk standards by defining and adjusting thresholds for key LLM metrics specific to your use case.

- Proactively mitigate harmful and costly risks, such as hallucinations, toxicity, safety violations, and prompt injection attacks, before they impact your enterprise or users.
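The threshold-based moderation described above can be sketched as follows. This is a minimal illustration under stated assumptions, not Fiddler's API: the `Threshold` class, the `violations` function, the metric names, the score values, and the limits are all hypothetical, and the scores are assumed to be floats in the range 0 to 1 that some scoring service has already returned.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Threshold:
    """A hypothetical guardrail rule for one metric."""
    metric: str
    limit: float
    block_if_above: bool = True  # False: block when the score falls below the limit


def violations(scores: Dict[str, float], thresholds: List[Threshold]) -> List[str]:
    """Return the names of metrics whose scores breach their thresholds."""
    breached = []
    for t in thresholds:
        score = scores.get(t.metric)
        if score is None:
            continue  # metric was not scored for this request
        too_high = t.block_if_above and score > t.limit
        too_low = (not t.block_if_above) and score < t.limit
        if too_high or too_low:
            breached.append(t.metric)
    return breached


# Hypothetical policy: risk scores must stay low, faithfulness must stay high.
POLICY = [
    Threshold("toxicity", 0.5),
    Threshold("jailbreak", 0.3),
    Threshold("faithfulness", 0.7, block_if_above=False),
]

# Hypothetical scores for a single prompt/response pair.
scores = {"toxicity": 0.05, "jailbreak": 0.82, "faithfulness": 0.9}
breached = violations(scores, POLICY)
allowed = not breached

print(breached)  # ['jailbreak']
print(allowed)   # False
```

Keeping the limits in a policy list, separate from the checking logic, is one way to let each team tune thresholds to its own risk standards without touching code.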

Monitor and Diagnose LLM Applications

- Use Fiddler’s Root Cause Analysis to uncover the full set of moderated prompts and responses within a specific time period.

- Fiddler’s 3D UMAP visualization enables in-depth data exploration to isolate problematic prompts and responses.

- Share this list with Model Development and Application teams to review and enhance the LLM application, preventing future issues.

Analyze Key LLM Insights for Business Impact

- Analyze LLM metrics with customized dashboards and reports.

- Track the key LLM metrics that matter most for your use case and stakeholders, driving business-critical KPIs.

- Gain oversight to meet AI regulations and compliance standards.

LLM Scoring for High-Impact Use Cases

Frequently Asked Questions

What are Fiddler Trust Models?

Trust Models are purpose-built, fine-tuned evaluation models that ship with the Fiddler Trust Service. They score agent and LLM outputs for safety, accuracy, and compliance directly in your environment, with no external API calls. Think of them as batteries-included evaluation: they work out of the box, return results in under 100ms, and don't add a line item to your LLM provider's invoice.

What do Trust Models evaluate?

Trust Models cover the metrics that matter most in production: hallucination and faithfulness, jailbreak detection, toxicity, safety (harassing, harmful, hateful, illegal, racist, sexist, sexual, unethical, violent), and sensitive information detection including 35+ PII entity types and healthcare-specific entities for HIPAA compliance. You get full coverage without building or maintaining your own evaluation prompts.

How do Trust Models compare to external LLM evaluation calls?

LLM-as-a-Judge is a common evaluation technique that uses general-purpose models like GPT-4 to score AI outputs. Those models are optimized for generation, not evaluation: they're good at producing language, not judging it. Trust Models are trained specifically for scoring, which means higher precision on evaluation tasks, sub-100ms latency (vs. seconds for an external API call), and no per-evaluation cost. The only scenario where general-purpose models have an edge is complex chain-of-thought reasoning, which represents a small fraction of what production evaluation actually requires.

Can I use Trust Models alongside my own custom evaluations?

Yes. Trust Models cover core evaluation metrics out of the box, and you can bring your own custom metrics, domain-specific evaluations, or third-party models alongside them. The platform supports both, so you're not locked into a single approach.

Why does the AI Trust Tax exist?

Most AI observability and evaluation platforms weren't built to run inside your environment. They route evaluation calls through external LLM APIs, which means every metric scored, every guardrail checked, and every output evaluated generates a new API call. The AI Trust Tax is the cost of those calls, which shows up in a separate bill from your foundation model provider, not from your AI observability vendor.
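To see how these calls add up, here is a rough back-of-the-envelope sketch. It assumes one external API call per metric per request at a flat price per call. The traffic volume, metric count, and price are hypothetical placeholders, not Fiddler or provider figures, so substitute your own numbers.

```python
def external_eval_calls(requests_per_day: int, metrics_per_request: int) -> int:
    """Number of external evaluation calls per day, one call per metric scored."""
    return requests_per_day * metrics_per_request


def daily_eval_cost(requests_per_day: int, metrics_per_request: int,
                    cost_per_call_usd: float) -> float:
    """Daily cost of external evaluation calls at a flat per-call price."""
    return external_eval_calls(requests_per_day, metrics_per_request) * cost_per_call_usd


# Hypothetical workload: 100,000 requests per day, 5 metrics scored on each.
calls = external_eval_calls(100_000, 5)
# Hypothetical flat price of $0.002 per evaluation call.
cost = daily_eval_cost(100_000, 5, 0.002)

print(f"{calls:,} external calls per day")
print(f"${cost:,.2f} per day in evaluation calls")
```

Because the call count is the product of traffic and metrics, adding a metric or growing traffic raises the bill proportionally under this model.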

How do you eliminate the AI Trust Tax?

The Trust Tax disappears when evaluation runs inside your environment with no external API calls. That requires purpose-built, task-specific models deployed alongside your AI applications, not hosted by a third party and billed per call.

Fiddler Trust Models are batteries-included. They run in your environment, cover hallucination, safety, PII/PHI detection, toxicity, and jailbreak detection out of the box, and return results in under 100ms.