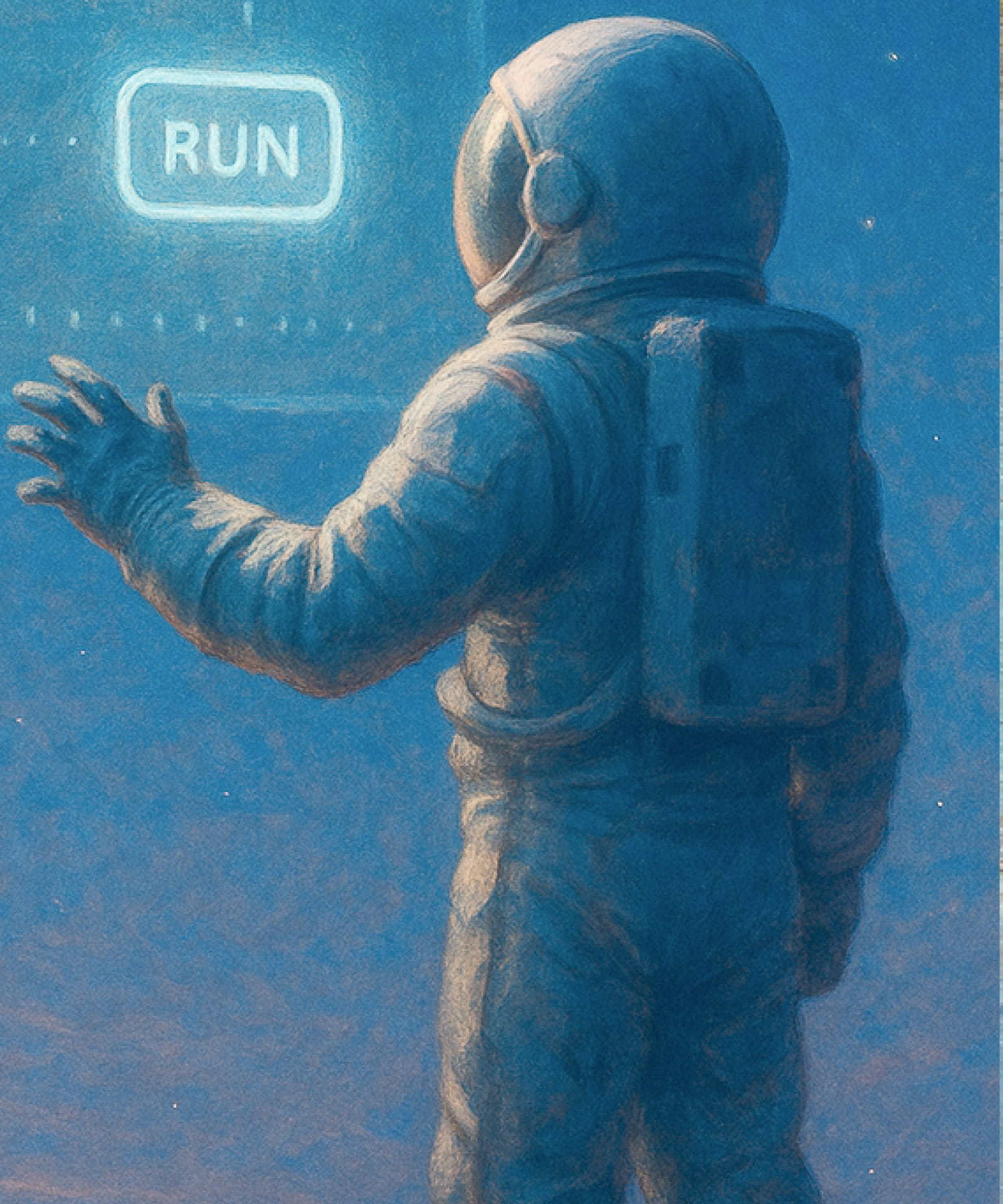

All Enterprise Agents Need the AI Control Plane

Experiments, monitoring, guardrails, and governance for compound AI.

Trusted by Industry Leaders and Developers

Enterprise Visibility, Context, and Control with Agentic Observability and Security

See Every Action, Understand Every Decision, and Control Every Outcome

Deliver High Performance. Protect from Risks. Maximize ROI.

Continuous monitoring and auditable governance, unlike passive evaluation and open-source systems.