Organizations are increasingly reliant on ML models to support their business goals, including demonstrating innovation, increasing productivity, and delighting customers. Accenture reports that nearly 75% of the world’s largest organizations they interviewed have already integrated AI into their business strategies and have reworked their cloud plans to achieve AI success.

With AI adoption on the rise, organizations also need to follow responsible AI practices. Financial institutions like HSBC and Danske Bank were involved in anti-money laundering scandals after their ML models failed to detect suspicious activities, and each had to pay heavy regulatory fines.

Enterprises that rely on ML models for operations and decision-making can minimize risks and adverse consequences, and prevent potential scandals, with an effective model risk management (MRM) framework. Organizations in industries such as banking, healthcare, and insurance have dedicated MRM teams that have instituted model governance, controls, and MRM practices to assess model accuracy, identify model risks and bias, and check for model limitations.

Financial institutions, for example, need to closely follow the SR 11-7: Model Risk Management guidance from the Federal Reserve and the Office of the Comptroller of the Currency (OCC). Under this guidance, financial institutions need to assess the adverse consequences of decisions based on models that are incorrect or misused. Once potential risks are identified, MRM and compliance teams follow a series of model risk approaches to resolve them. It is therefore critical for ML teams in financial institutions, and in other highly regulated industries, to provide MRM reports to Legal, Risk, and Compliance teams for regular assessments.

We are excited to announce that the Fiddler Report Generator (FRoG) is now available to Fiddler customers with cross-functional, periodic reporting responsibilities, so they can create custom reports for MRM and compliance reviews. FRoG extends the benefits of the Fiddler AI Observability platform, giving customers the model analytics to continuously review models and pinpoint areas for improvement while ensuring models are performant and fair, avoiding costly fines, and preserving brand equity.

Fiddler Report Generator for AI Risk and Governance

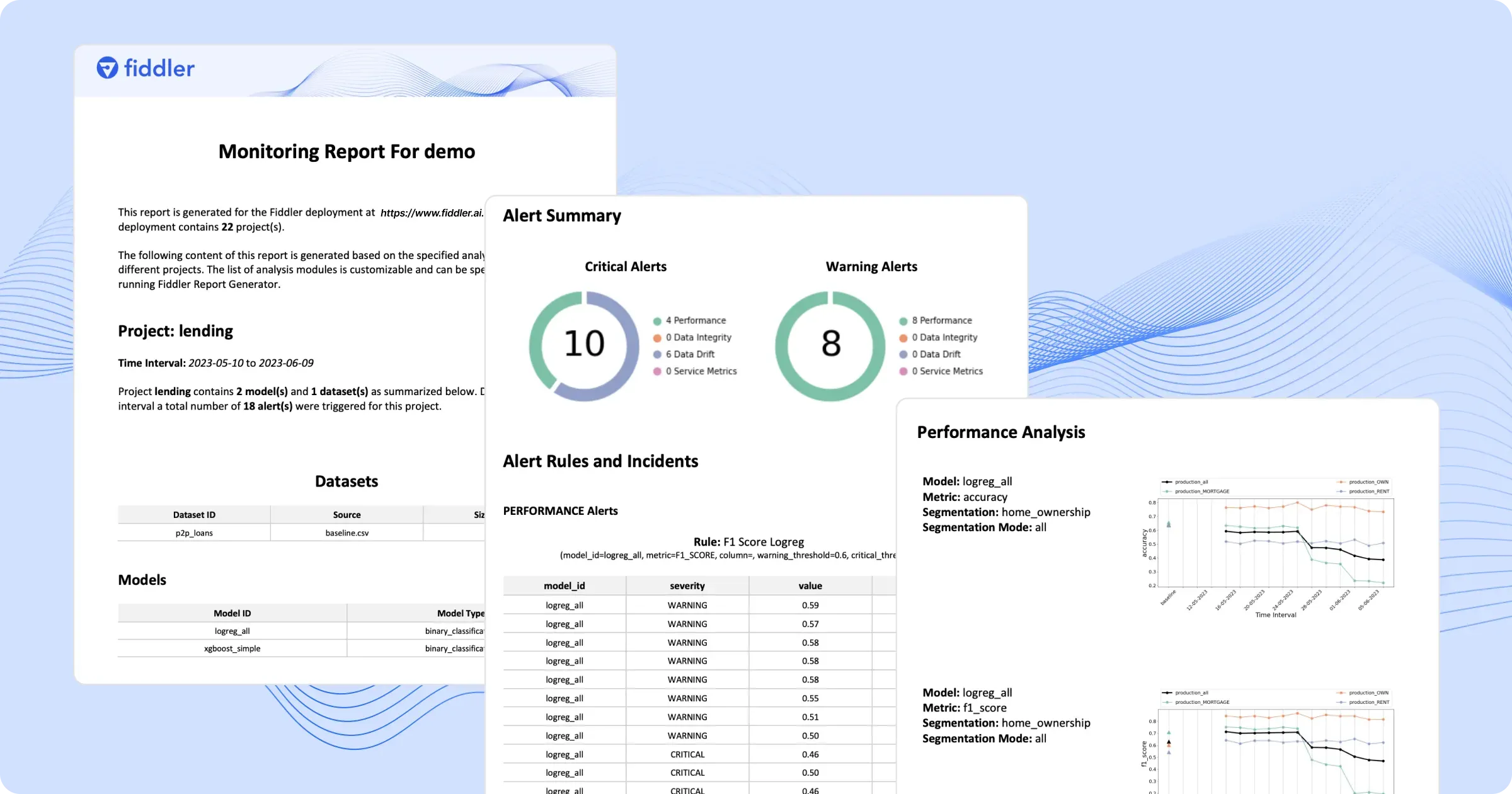

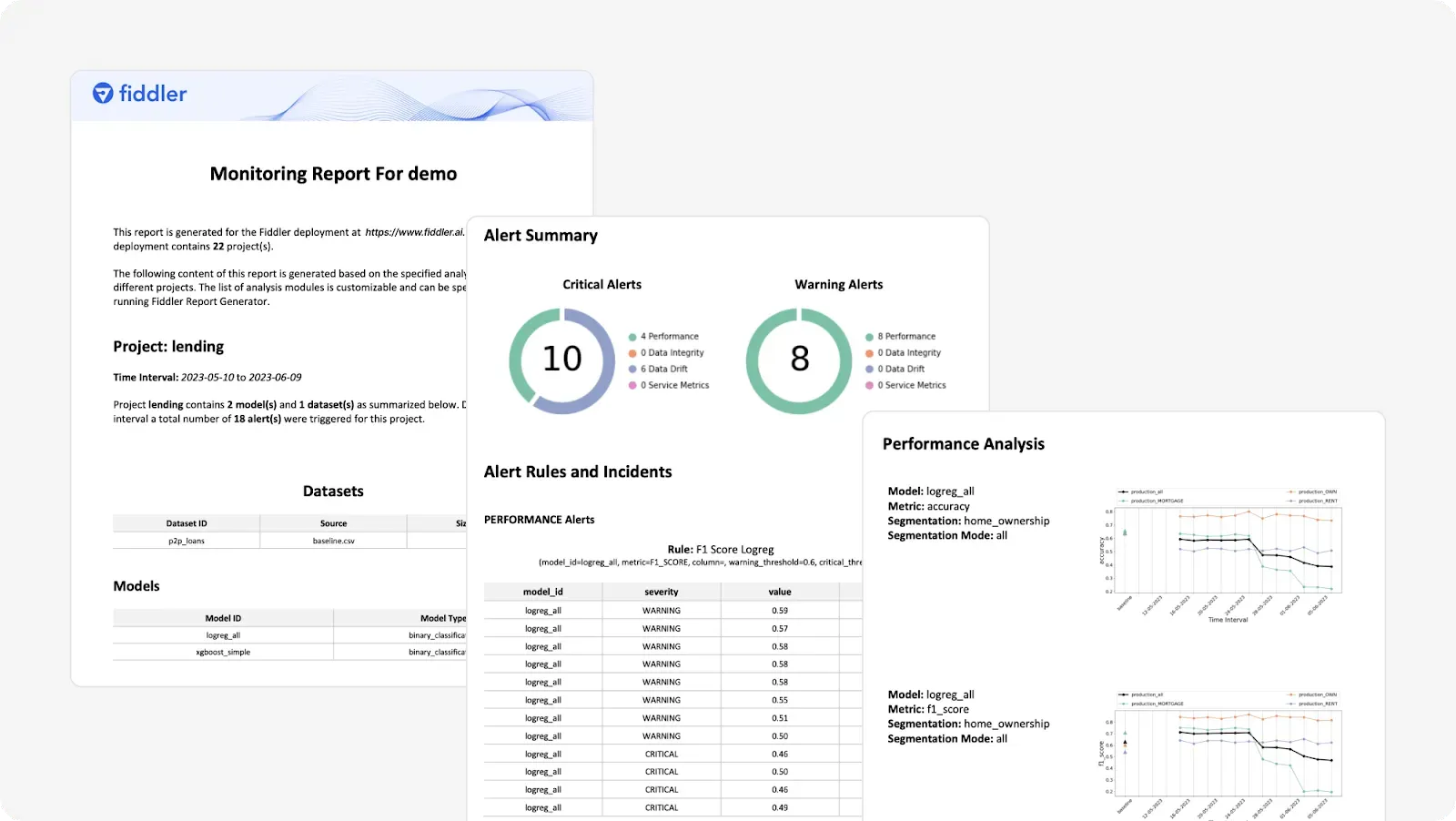

The Fiddler Report Generator is a stand-alone Python package that enables Fiddler users to create fully customizable reports for the models deployed on Fiddler. These reports can be downloaded in different formats (e.g., PDF and DOCX) and shared with teams for periodic reviews.

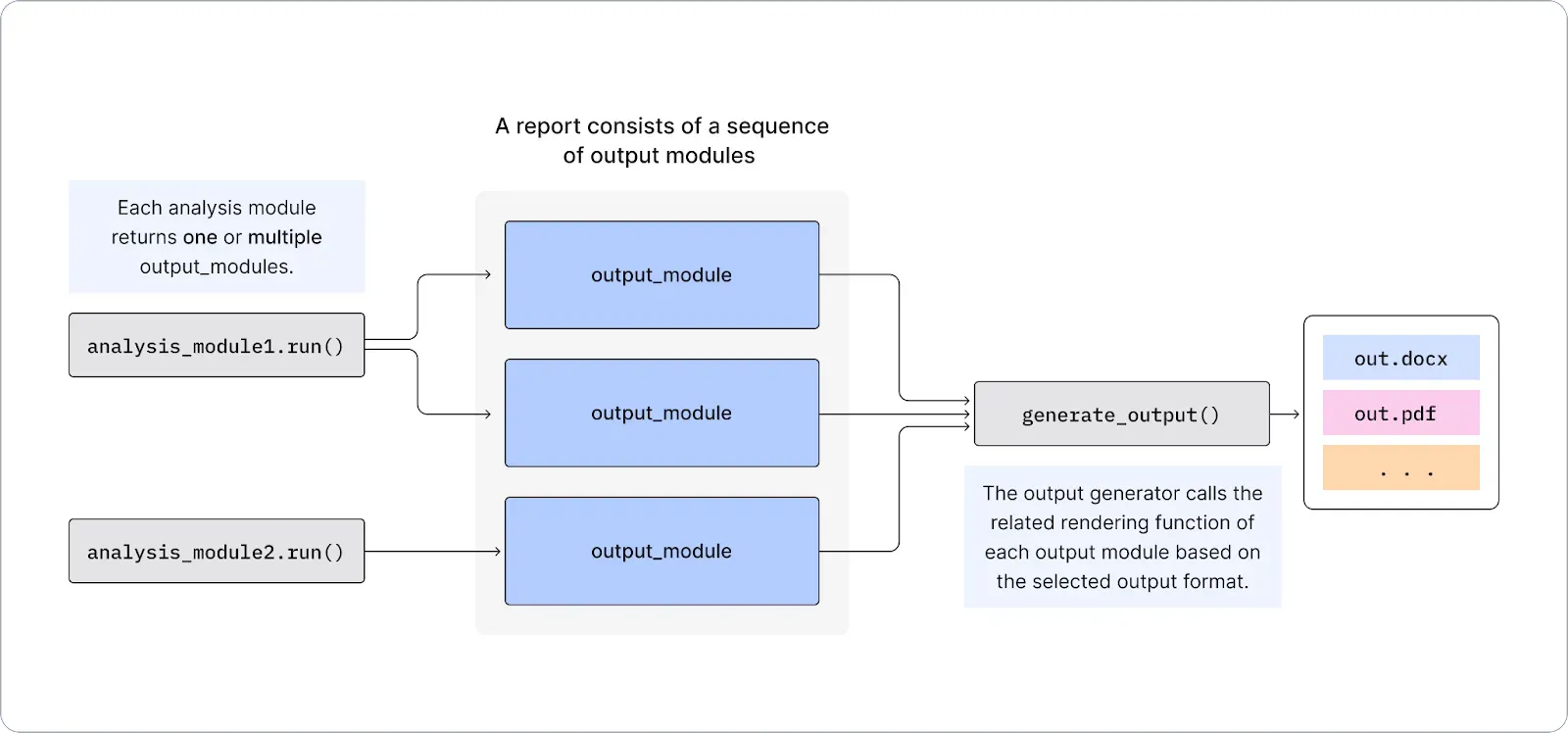

FRoG's modular design makes it easy to compose analysis modules and customize a report. Users can call different analysis modules, such as a monitoring chart or a performance summary, to create the specific components they need in a report. These analysis modules communicate with the Fiddler backend through the Fiddler client and retrieve the data sketches and calculated metrics needed to generate each report component.

FRoG reports show pertinent information for risk and compliance reviews, including:

- Project, model, and dataset summaries and statistics

- Model metrics: model performance, data drift, data quality, traffic

- Model performance time series with customizable metrics and data segmentations

- Confusion matrix, Area Under the Curve (AUC), and Receiver Operating Characteristic (ROC) curve charts to assess and compare model performance

- Explainability charts to understand the reasoning behind model predictions including global feature impact/importance, and point-level explainability

- Failure case analysis to further investigate cases where the model was confident but incorrect

- Alert summary charts and details of alert incidents for each alert rule
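To make the failure case analysis item above concrete, here is a minimal sketch of how "confident but incorrect" predictions might be selected for a binary classifier. It is an illustration only and is not FRoG's API: the `Prediction` type, the `confident_failures` function, and the `threshold` and `min_confidence` parameters are hypothetical names chosen for this example.

```python
from typing import NamedTuple


class Prediction(NamedTuple):
    """Hypothetical record for one scored event (not a Fiddler type)."""
    row_id: str
    score: float  # predicted probability of the positive class
    label: int    # ground-truth label, 0 or 1


def confident_failures(preds, threshold=0.5, min_confidence=0.9):
    """Return misclassified rows with confidence >= min_confidence, most confident first."""
    failures = []
    for p in preds:
        predicted = 1 if p.score >= threshold else 0
        # Confidence is the probability assigned to the predicted class.
        confidence = p.score if predicted == 1 else 1.0 - p.score
        if predicted != p.label and confidence >= min_confidence:
            failures.append((confidence, p))
    failures.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in failures]


sample = [
    Prediction("a", 0.97, 0),  # confident and wrong
    Prediction("b", 0.95, 1),  # confident and right
    Prediction("c", 0.55, 0),  # wrong, but not confident
    Prediction("d", 0.02, 1),  # confident and wrong
]
failure_ids = [p.row_id for p in confident_failures(sample)]
print(failure_ids)
```

Rows like these are the ones a reviewer would typically want to inspect first, since a high-confidence error is more likely to point to a data or labeling problem than a borderline one.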

ML teams can periodically share these reports with stakeholders throughout the ML lifecycle:

Model Validation in Pre-production:

It is critical for MRM and Compliance teams to validate that models will perform as expected in production. Model validation consists of two key elements:

- Evaluation of Conceptual Soundness: Assess the methods behind model design and quality

- Outcomes Analysis: Understand the reasoning behind decisions made by the model

Continuous Monitoring in Production:

Roll up performance metrics by quarter, compare them with train-time performance, and identify problem areas for model retraining to minimize model risks.
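As a rough illustration of that quarterly roll-up, the sketch below groups labeled predictions by calendar quarter, computes accuracy for each quarter, and flags quarters that fall more than a tolerance below a train-time baseline. It does not use FRoG or the Fiddler client: the function names, the `tolerance` value, the 0.95 baseline, and the sample records are all assumptions made for this example.

```python
from collections import defaultdict
from datetime import date


def quarter_of(day):
    """Map a date to a label such as '2023-Q2'."""
    return f"{day.year}-Q{(day.month - 1) // 3 + 1}"


def quarterly_accuracy(records):
    """records: iterable of (date, predicted_label, true_label) tuples."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for day, predicted, actual in records:
        q = quarter_of(day)
        totals[q] += 1
        hits[q] += int(predicted == actual)
    return {q: hits[q] / totals[q] for q in sorted(totals)}


def flag_for_retraining(by_quarter, train_accuracy, tolerance=0.05):
    """Return quarters whose accuracy is more than `tolerance` below the baseline."""
    return [q for q, acc in by_quarter.items() if train_accuracy - acc > tolerance]


# Made-up production records for illustration only.
records = [
    (date(2023, 1, 15), 1, 1),
    (date(2023, 2, 20), 0, 0),
    (date(2023, 3, 5), 1, 1),
    (date(2023, 3, 28), 0, 0),
    (date(2023, 4, 10), 1, 0),
    (date(2023, 5, 2), 0, 0),
    (date(2023, 6, 18), 1, 0),
    (date(2023, 6, 30), 1, 1),
]
by_quarter = quarterly_accuracy(records)
flagged = flag_for_retraining(by_quarter, train_accuracy=0.95)
print(by_quarter, flagged)
```

In practice the metric, the baseline, and the tolerance would come from the model's validation documentation rather than being hard-coded.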

Join our community to chat with our data science team to learn how you can use the Fiddler Report Generator to minimize risk and improve governance in your organization!