MLOps, or DevOps for ML, is a burgeoning enterprise discipline that helps Data Science (DS) and IT teams accelerate the ML lifecycle of model development and deployment. Model training, the first step, is central to model development and is now widely available in Jupyter notebooks or through automated training (AutoML). But ML is not an easy technology to deploy, and as a result a majority of ML models never make it to production. This post focuses on the final hurdle to successful AI deployment and the last mile of MLOps: ML monitoring.

This blog post is a summary of our new whitepaper, The Rise of MLOps Monitoring. Download the full whitepaper here.

Evolution of Monitoring

Software development was historically a slow and tedious waterfall process that produced disconnected and inconsistent solutions built on homegrown or customized software. The growth of the web and the subsequent onset of cloud migration in the early 2000s brought agile methodologies to the forefront, driven by the need to release software rapidly. Web technologies converged on a unified technology stack, which set the stage for a new category: DevOps. DevOps enabled automation at every step of the application lifecycle, accelerating time to market considerably.

Monitoring, in particular, changed significantly with the migration from bespoke data centers to the cloud and its consistent standards. Real-time streaming data, historical replay, and rich visualization tools are standard capabilities in monitoring solutions today. The popular products offer not just insights into simple statistics but also business-ready API and component integrations.

There are several benefits to DevOps monitoring: it offers broad visibility across all aspects of software and hardware deployment, increased observability that aids debugging and a better understanding of root causes, clear ownership of roles and responsibilities between engineering and DevOps, and greater iteration speed thanks to a streamlined process with clear stakeholders and readily available monitoring tools.

ML Monitoring is at a Similar Turning Point

AI is being increasingly adopted across industries, with businesses expected to double their spending on AI systems from a projected $35.8 billion in 2019 to $79.2 billion by 2022. However, the complexity of deploying ML has hindered the success of AI systems. MLOps, and specifically the productionizing of ML models, comes with challenges similar to those that plagued software before the arrival of DevOps monitoring: lack of problem visibility, lack of observability, no clear team ownership, and slow iteration.

DevOps for ML Monitoring is Missing

MLOps Monitoring is needed to solve these challenges. ML models are unique software entities - as compared to traditional code - and are trained for high performance on repeatable tasks using historical examples. Their performance can fluctuate over time due to changes in the data input into the model after deployment. So, successful AI deployments require continuous ML monitoring to revalidate their business value on an ongoing basis.
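To make the idea of changing input data concrete, here is a minimal sketch of one widely used drift score, the Population Stability Index (PSI), which compares the distribution of a single numeric feature at training time against its distribution in live traffic. It needs no labels. This is an illustration only, not a description of any particular product: the function name `psi`, the choice of 10 quantile bins, and the smoothing constant `eps` are all assumptions made for this example, and both samples are assumed to be non-empty.

```python
import math
from bisect import bisect_right


def psi(baseline, live, bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature.

    Hypothetical helper for illustration; assumes both samples are non-empty.
    """
    ordered = sorted(baseline)
    # Interior bin edges at baseline quantiles, so each bin holds
    # roughly the same share of the baseline sample.
    edges = [ordered[int(len(ordered) * i / bins)] for i in range(1, bins)]

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # bisect_right maps a value to a bin index in 0..bins-1.
            counts[bisect_right(edges, v)] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    p_base = proportions(baseline)
    p_live = proportions(live)
    return sum((l - b) * math.log(l / b) for b, l in zip(p_base, p_live))


if __name__ == "__main__":
    training_sample = list(range(1000))
    shifted_traffic = [x + 500 for x in training_sample]
    print("no drift:", psi(training_sample, training_sample))
    print("shifted:", psi(training_sample, shifted_traffic))
```

A score of zero means the two binned distributions are identical, and the score grows as live data moves away from the baseline. Commonly quoted rules of thumb treat values above roughly 0.25 as a significant shift, but any alerting threshold should be tuned per feature.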

Because ML models have nuanced issues specific to machine learning, traditional monitoring products were not designed to take on this challenge.

There are 7 key challenges to solving MLOps issues:

- Identifying performance issues without real-time labels: When models are trained on a data set, practitioners use ‘ground truth’ or labels to measure the model’s accuracy and other metrics. In the absence of real-time labels, model metrics cannot be calculated to assess real-time model performance.

- Identifying data issues and threats: Modern models are increasingly driven by complex feature pipelines and automated workflows in which dynamic data goes through several transformations. With so many moving parts, it’s not unusual for data inconsistencies and errors to chip away at model performance over time, unnoticed.

- ML models are black boxes: The black-box nature of ML models makes them difficult for ML practitioners to understand and debug, especially in a production environment.

- Calculating live performance metrics: Recording a model decision or outcome can help give directional insights. To do this, the labels or outcomes have to be ingested, then correlated with the predictions, and finally compared with a historical time window. Metrics tracked in analytics tools typically lag behind real time, so the information arrives too late to be actionable.

- Bias: Since ML models capture relationships from training data, they are likely to propagate or amplify existing bias in that data, and may even introduce new bias. Calculating real-time bias after model release, and analyzing bias issues in context, are time-intensive and error-prone.

- Back testing and champion-challenger: When deploying a new model, teams either run historical data through the new model or run the new model side by side with the older model, otherwise known as champion-challenger. Both approaches can be error-prone and slow.

- Auto retraining: A common solution to problems in a deployed model is to train another model with the same input parameters but with new data. Doing this by hand is time-intensive. An easier approach is to have a system that automatically selects a new sample from live data, trains the model with the same training code, and redeploys it, all without needing any supervision.
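The live-metrics procedure described in the list above (ingest the labels, correlate them with predictions, then compare against a historical window) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the `Prediction` record, the `windowed_accuracy` and `degraded` helpers, the use of an `event_id` as the join key, and the 0.05 tolerance are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    event_id: str     # hypothetical key used to join a prediction to its label
    timestamp: float  # when the prediction was served (seconds since epoch)
    predicted: int    # predicted class


def windowed_accuracy(predictions, labels, window_start, window_end):
    """Accuracy over predictions served in [window_start, window_end).

    `labels` maps event_id -> actual class and may be incomplete, since
    ground truth often arrives late. Returns (accuracy, coverage), where
    coverage is the share of in-window predictions that already have a
    label. Accuracy is None when no labels have arrived yet.
    """
    in_window = [p for p in predictions
                 if window_start <= p.timestamp < window_end]
    joined = [(p.predicted, labels[p.event_id])
              for p in in_window if p.event_id in labels]
    if not joined:
        return None, 0.0
    correct = sum(1 for predicted, actual in joined if predicted == actual)
    return correct / len(joined), len(joined) / len(in_window)


def degraded(current, reference, tolerance=0.05):
    """Flag a window whose accuracy fell more than `tolerance` below a reference window."""
    return current is not None and current < reference - tolerance


if __name__ == "__main__":
    served = [
        Prediction("a", 10.0, 1),
        Prediction("b", 20.0, 0),
        Prediction("c", 30.0, 1),   # label has not arrived yet
        Prediction("d", 130.0, 1),  # outside the window below
    ]
    arrived_labels = {"a": 1, "b": 1, "d": 0}
    accuracy, coverage = windowed_accuracy(served, arrived_labels, 0.0, 100.0)
    print(accuracy, coverage, degraded(accuracy, reference=0.9))
```

Reporting coverage alongside accuracy matters here: when only a small share of labels has arrived, the accuracy figure rests on few examples and should be read with caution.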

Explainable Monitoring to solve MLOps issues

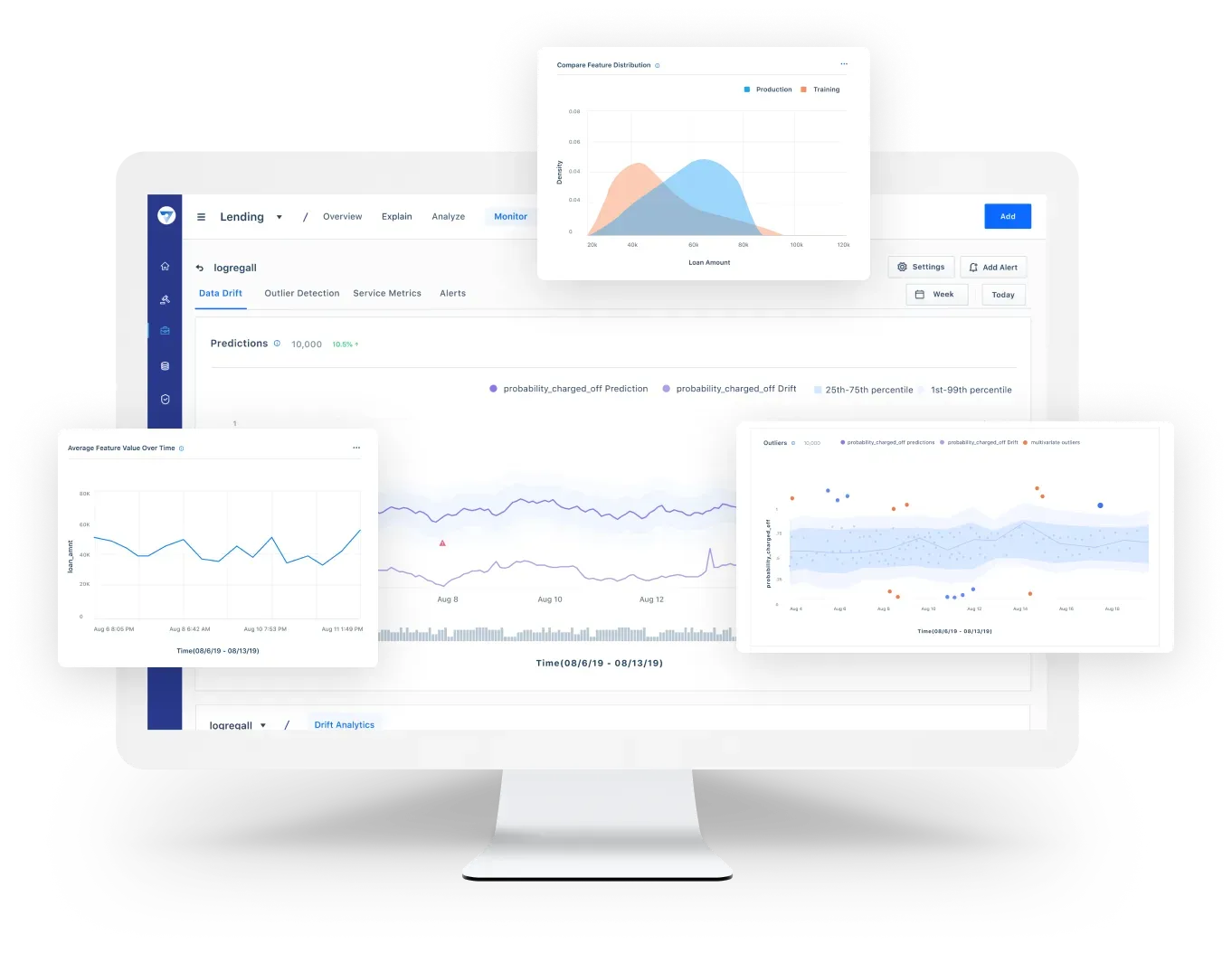

These challenges point to a critical need for a solution that oversees an ML deployment, not only surfacing operational issues but also, and more importantly, helping teams understand the problems behind those issues. MLOps monitoring solutions are needed to accelerate the continued adoption of AI across businesses by enabling teams to streamline ML model deployments with real-time visibility and fast remediation.

Download the full whitepaper to dive deeper into these MLOps challenges and learn how Fiddler uses Explainable Monitoring to solve MLOps issues.