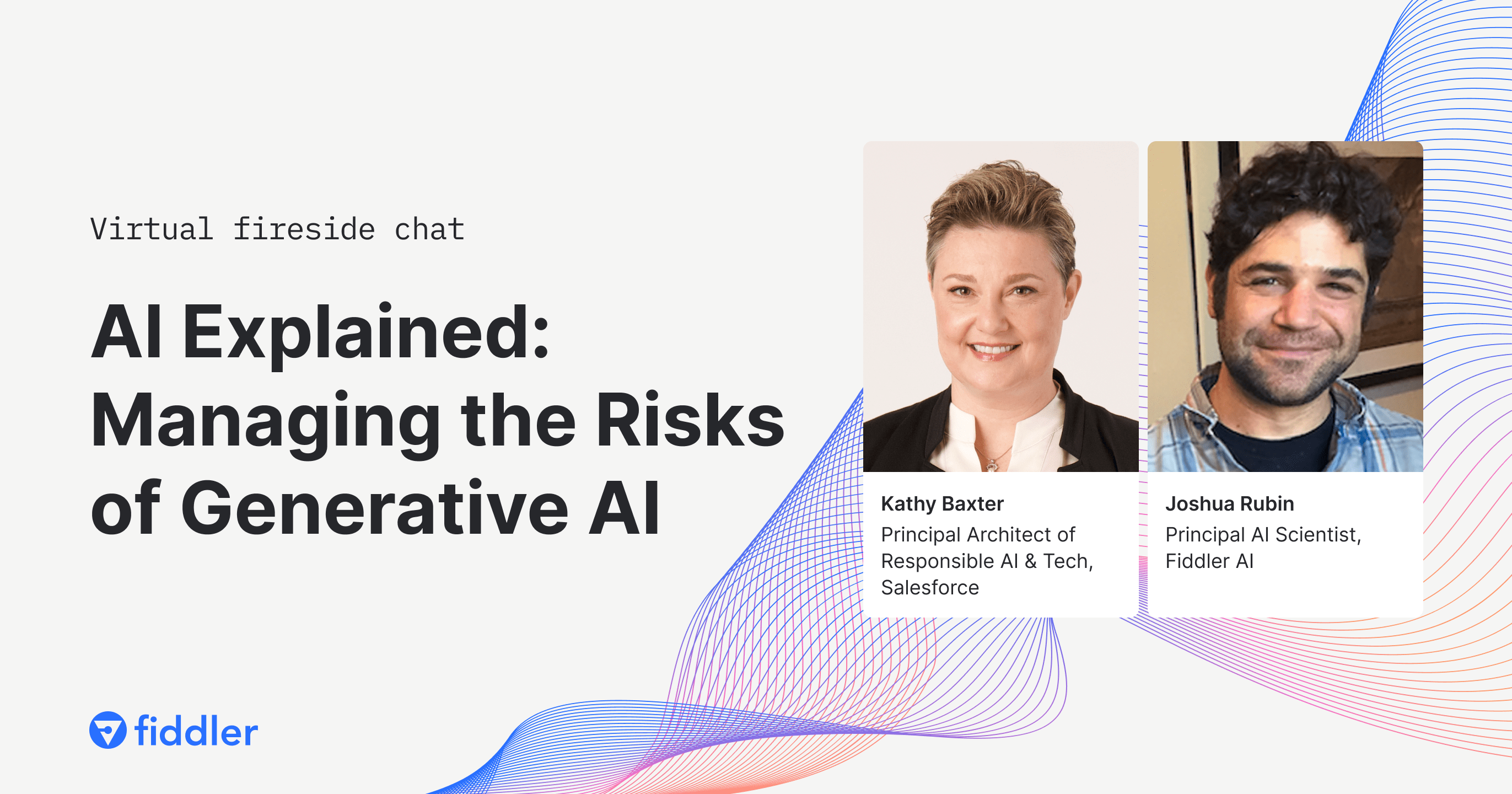

AI Explained: Managing the Risks of Generative AI

January 18, 2024

10AM PT / 1PM ET

Registration is now closed. Please check back later for the recording.

Generative AI has become widely popular, with organizations finding ways to use it to drive innovation and business growth. Adoption, however, remains low because of ethical implications and unintended consequences that can negatively impact an organization and its consumers. Kathy Baxter, Principal Architect of Responsible AI & Tech at Salesforce, will discuss ethical AI practices organizations can follow to minimize potential harms and maximize the social benefits of AI.

Watch this AI Explained to learn:

- Ethical AI practices and a roadmap for building the infrastructure for ethical AI development

- Tactical tips to safely integrate generative AI into applications

- Human-in-the-loop practices for continued oversight, testing, and improvement of AI applications

AI Explained is our AMA series featuring experts on the most pressing issues facing AI and ML teams.

Featured Speakers

Kathy Baxter

Principal Architect of Responsible AI & Tech at Salesforce

Kathy develops research-informed best practices to educate Salesforce employees, customers, and the industry on the development of responsible AI. She is a member of Singapore’s Advisory Council on the Ethical Use of AI and Data, a Visiting AI Fellow at NIST, and a member of the Board of EqualAI. Prior to Salesforce, she worked in User Experience Research at Google, eBay, and Oracle. She received her MS in Engineering Psychology and BS in Applied Psychology from the Georgia Institute of Technology.

Joshua Rubin

Head of AI Science at Fiddler AI

Joshua Rubin is Head of AI Science at Fiddler AI, an enterprise AI Observability company. He has built and led a data science team that developed novel explainability tools for computer vision and multimodal deep-learning models, as well as techniques for measuring model robustness and drift in unstructured data, both key components of Fiddler's LLM observability product. Most recently, he has been developing small BERT-scale models to close the feedback loop on measuring large language model performance, serving customers including cloud-native travel platforms, large financial services firms, ad-tech companies, and cryptocurrency exchanges.