Generative AI Meets Responsible AI: Innovating with Generative AI

Generative AI is being used to innovate and improve processes across industries, from drug discovery to work automation.

Watch this panel session on Innovating with Generative AI to learn how:

- Engineering teams enhance their productivity using generative AI

- IBM is leveraging generative AI models to advance medical innovation

- Generative AI is driving innovation across domains

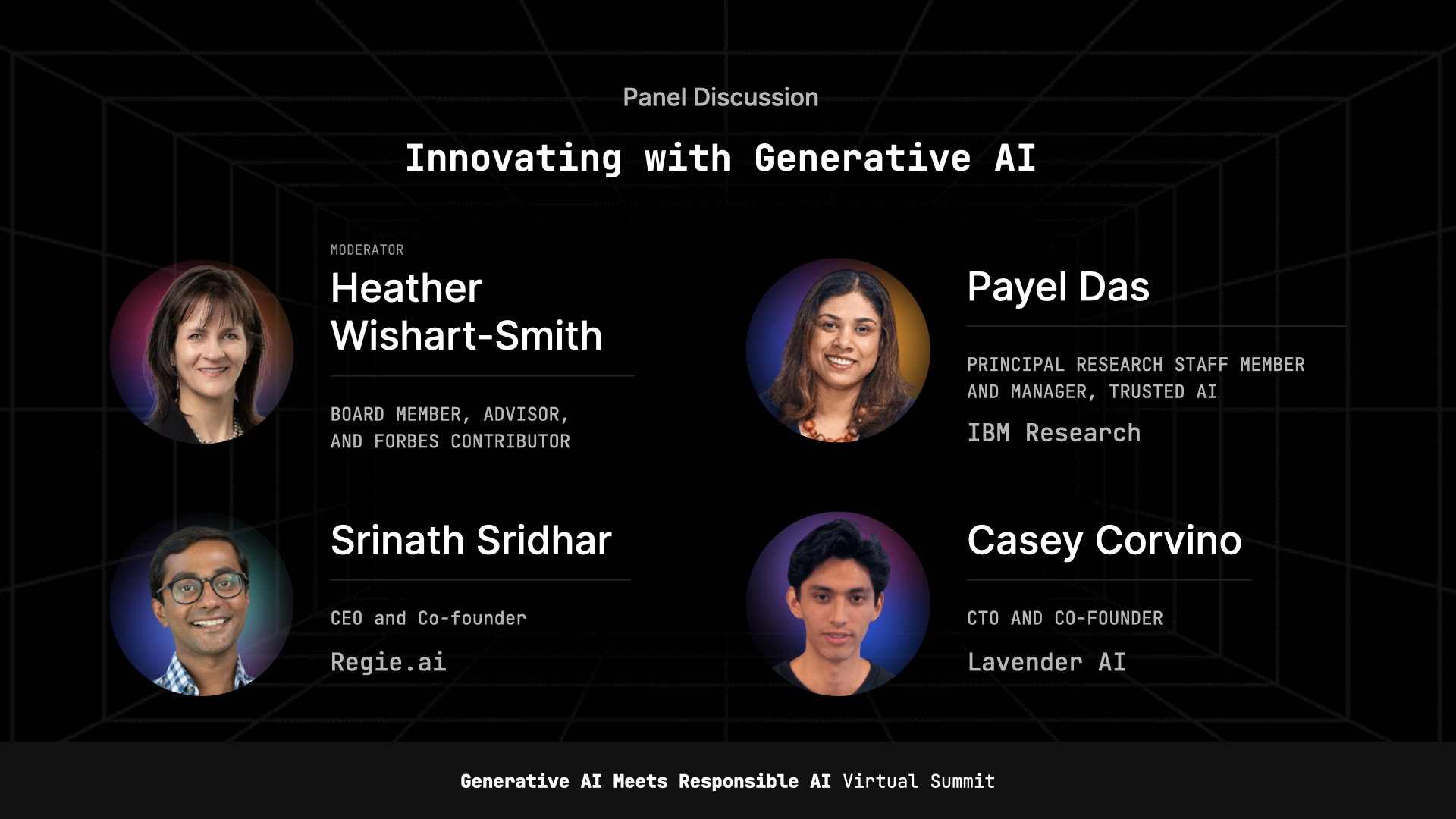

Moderator: Heather Wishart-Smith, Board Member, Advisor, and Forbes Contributor

Panelists:

- Payel Das, Principal Research Staff Member and Manager, Trusted AI, IBM Research

- Srinath Sridhar, CEO and Co-founder, Regie.ai

- Casey Corvino, CTO and Co-founder, Lavender AI

Mary Reagan (00:07):

So next up we have a panel discussion on innovating with generative AI. And with that I'm gonna introduce our moderator, Heather Wishart-Smith, who I hope will be here, and if not, we can bring Krishna back and chat a little bit longer. Let's see.

Heather Wishart-Smith (00:27):

I'm here. Can you hear me, Mary?

Mary Reagan (00:28):

Wonderful. There you are, Heather. Hi. Nice to see you again. You as well. So Heather is an innovation strategist and a business leader who provides advisory and consulting services to corporations, startups, and venture capital funds. She has extensive experience in managing large engineering, consulting, and professional services practices, including places like Jacobs. She has held several other notable leadership roles. She's a former US Navy Civil Engineer and a regular contributor to Forbes. She holds master's and bachelor's degrees in Civil Engineering from the University of Virginia. And with that, I'm gonna turn it over to you.

Heather Wishart-Smith (01:11):

Thank you so much, Mary. Thanks so much to all of our panelists. I am going to go through a brief introduction to each of them, and then we'll jump right into the panel. So, beginning with Payel Das. Dr. Das is a Principal Research Staff Member and IBM Master Inventor, and a Manager at IBM's Research Artificial Intelligence Research Center. Her research includes statistical physics, trustworthy machine learning, neuro- and physics-inspired AI, and machine creativity. She has co-authored over 45 peer-reviewed publications and several patent disclosures. She's given dozens of talks and has received several awards. So we're delighted for Dr. Das to join us. She is joined by Srinath Sridhar, who is the CEO and Co-founder of Regie.ai. It is a generative AI platform for enterprise sales teams that personalizes content using data that's unique to their business.

Dr. Sridhar's PhD dissertation was cited by Science Magazine's Breakthrough of the Year in 2007, and he was a founding engineer of Bloomreach, as well as one of the first 100 engineers of Facebook. Bloomreach is an AI marketing tech startup. He also co-founded Onera, which was a venture-backed AI supply chain startup that had a successful exit. He also co-founded Regie.ai in 2020 when GPT-3 came out in private beta. So thank you to Dr. Sridhar for joining us as well. And then last but not least, Casey Corvino. He is the CTO and Co-founder of Lavender. There he is responsible for product and engineering. Lavender is an AI-powered sales email coaching platform. It has over 20,000 active users and well-known customers like Twilio, Sendoso, Clari, and Sharebite. Casey was an early adopter of large language models like ChatGPT, and he takes a practical approach to applying AI to solving costly communications problems. Prior to Lavender, he was the CTO of an early-stage fashion PR automation startup after graduating early from NYU in just three years. So, welcome and thank you to all of our panelists. I'm going to jump right in and start out with Payel, whose research group is using generative AI at IBM for a variety of tasks. So, Dr. Das, could you provide an overview of just how you're using it?

Payel Das (03:47):

Well, before we even use it: my team at IBM, we are part of the trustworthy machine intelligence team. So we work on the science aspect of generative AI models. Like, you know, what is the best algorithm to make them, what are some of the theories behind generative AI models, the methods to implement them in a greener manner, you know, a more trustworthy manner, as well as how to evaluate them. So we work on the entire lifecycle of generative AI models, from their conceptualization all the way to their use in many different domains, many different enterprise as well as scientific applications. So when we talk about enterprise applications, you know, you can imagine: how can we use a chatbot in some of the enterprise applications where there are guardrails, right?

On the scientific side, we are working with many eminent, you know, academic and industry partners on how we can apply generative AI to find the best drug, find the next drug for the next virus that's gonna attack society. So these are some of, yeah, and we are also working with a number of banks on financial transaction services. And as you can imagine, all of these regulated industries have a lot of rules and regulations in terms of data sharing. So one way we are bringing generative AI there is: how can we create trustworthy synthetic data using these models that can then be used to train the downstream AI models for different tasks, different applications.

Heather Wishart-Smith (05:23):

Exciting applications. Thank you so much. I'll turn that same question then to Srinath. So Dr. Sridhar, could you please tell us a little bit about how you're using it at your company?

Srinath Sridhar (05:36):

Yeah, I'll keep it super brief. So, you know, we wanted to build the generative AI platform for sales teams. So think ChatGPT, but not a chat interface, an interface that's actually useful for sales teams. So that's what Regie does. So if you think about sales teams, they need to create content in three different manners. First is they usually build content in sales sequences. So these are emails that go into automation platforms like Outreach, SalesLoft, HubSpot, Marketo, and so on. So Regie asks a series of questions, then helps you build out the sequence that an SDR manager or sales team manager can actually approve. The second is to build and store sales collateral. So things like case studies, testimonials, customer proof, wins, and so on. And lastly, personalization. So I have all of this data, but how do I write this into an email that a prospect will actually understand and make sense of for their use case? So I'm selling Fiddler, but how do I make Fiddler make sense for Regie as an example? So those are the three places that Regie operates out of. So we try to do personalization in, you know, seconds and sales sequences in minutes: persona-specific content for personalization at scale and prospect-specific content for personalization at point.

Heather Wishart-Smith (07:00):

Terrific, thank you. I'll turn that same question then to Casey. Can you describe how you're using generative AI at Lavender, please?

Casey Corvino (07:08):

Awesome. Well, first off, thanks for having me on this panel. I'm really excited to be with this great panel and to talk about some of this cool stuff we're working on. First off, Lavender is not a generative AI company. We are a communication intelligence company. So what that really means in our use case is how can we sort of look at emails, all these millions of emails we're looking at, and identify what works best and what doesn't. So for example, it could be something as simple as what's the best first word? What's the best complexity of the email, the reading level, formality, et cetera. And sort of before GPT, years ago, this product was really built to sort of help that junior SDR rep, someone that's coming straight from college, doesn't really know how to write a great email.

And our tool would come in, give them all these recommendations as they're writing their emails, and really help them optimize it for outcomes. Now with the rise of ChatGPT, it's changing a little bit. So before, we were sort of teaching the SDR how to write a better email; now we're sort of teaching the computer, or GPT, how to write a better email. So that's one of the ways we're really thinking about it. More specifically, we're applying that to research. So the same way we were teaching the SDR how to research their recipient to personalize an email, very similarly, we're now teaching GPT the best ways to research a recipient to get them started. Also the obvious one, drafting emails. And then, more akin to our intelligence layer, using our intelligence to sort of help GPT revise those emails.

Heather Wishart-Smith (08:33):

Very good, thank you. I'm gonna stick with you then and ask you to address: what do you feel are some of the biggest technical challenges associated with generative AI systems, and how do you approach solving those challenges?

Casey Corvino (08:45):

Yeah, great question. So on that note of the intelligence layer, we've really done our science and our data science, obviously, to figure out what is the best email. So now we need to use that to sort of, for lack of a better word, trick GPT to do those best practices. A good example would be something like a complex sentence, right? It's something that really hurts your reply rates in sending an email. So how do we now get GPT to make a complex sentence not complex? Specifically, just telling GPT "Hey, make this sentence shorter" doesn't really work well. So we had to take a step back and sort of reevaluate how we're thinking about this, and what we found was really understanding the linguistics and sort of the semantic knowledge of what makes the sentence complex really helped us.

And that sort of goes to the fact of, like, for example, something that's a very normal thing for a human to say is "running late to a meeting." That's a very natural way of saying it, but GPT being the computer and being sophisticated in using proper grammar, is gonna say something like, "I am running late," which sounds a bit more robotic. So sort of having a way to sort of figure out what's causing these to be exactly complex and then telling GPT very specific commands to, again, for lack of a better word, trick it to actually do what we want it to do.
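Editor's illustration: the idea of replacing a vague instruction ("make this shorter") with specific, linguistically grounded commands can be sketched roughly as below. This is a minimal sketch under stated assumptions, not Lavender's method: the subordinator list, the `MAX_WORDS` threshold, and both function names are hypothetical, and a real system would likely use a proper linguistic parser.

```python
import re
from typing import List, Optional

# Hypothetical complexity markers and threshold, chosen only for illustration.
SUBORDINATORS = {"although", "because", "which", "whereas", "while", "since", "unless"}
MAX_WORDS = 20


def complexity_reasons(sentence: str) -> List[str]:
    """Return specific, human-readable reasons why a sentence looks complex."""
    words = re.findall(r"[A-Za-z']+", sentence.lower())
    reasons = []
    if len(words) > MAX_WORDS:
        reasons.append(f"it has {len(words)} words; keep it under {MAX_WORDS}")
    markers = [w for w in words if w in SUBORDINATORS]
    if len(markers) >= 2:
        reasons.append(
            "it chains subordinate clauses (" + ", ".join(markers)
            + "); split it into separate sentences"
        )
    if sentence.count(",") >= 3:
        reasons.append("it has many comma-separated phrases; remove the asides")
    return reasons


def build_revision_prompt(sentence: str) -> Optional[str]:
    """Build a targeted rewrite instruction, or None if nothing was flagged."""
    reasons = complexity_reasons(sentence)
    if not reasons:
        return None
    bullets = "\n".join(f"- {reason}" for reason in reasons)
    return (
        "Rewrite the sentence below. Fix only these issues:\n"
        f"{bullets}\n\nSentence: {sentence}"
    )
```

The returned string would then be sent to whichever language model is in use; a short sentence such as "Running late to a meeting." produces no prompt at all, so it is left untouched.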

Heather Wishart-Smith (09:57):

Very good. All right, Srinath, I'm gonna switch to you now. So how do you see the landscape of generative AI tools today and how do you think that landscape will evolve?

Srinath Sridhar (10:10):

Yeah, great question. So I'll keep it generic so that it's useful for everybody here. So I see the landscape as having four different players. The first big players, I think, are the foundational model players. So this includes Google, Microsoft, obviously OpenAI, Stability, and so on. So these are the people who are actually building the tech. Then the second group, which is the group that's most talked about, are horizontal players. So this includes Jasper, CopyAI, Writesonic, Simplified, and hundreds of companies in that space. Then there are the vertical players. So this includes Lavender as an example, and Regie, and the work that Payel's doing at IBM. So we will have very specific verticals where we will actually build out generative AI applications. We'll definitely see stuff in patent law as an example.

I mean, just get a bunch of inputs and write a patent. And legal is a huge one. I'm pretty excited about doing pull requests. I'm waiting for the day when I can actually describe the pull request, and GitHub automatically writes the code and sends the pull request for code reviews, and so on. I'm particularly excited on the vertical side. And then the last piece is you have a fourth player, which is the platform players, and these include not just Google and Microsoft, but Adobe, Notion, and so on. So those are the four big groups that I can think of. So starting from foundations, horizontal players, vertical players, and platform players. And I think what will end up happening is, first question, biggest question on the foundational players is: is it winner takes most, like search, or is it gonna be a contested field for a long time?

Are Microsoft and Google gonna contest? Well, with OpenAI, Microsoft has exclusive access, but maybe Amazon gets in the picture as well. So it's not clear how that will all shape up. On the horizontal, it's pretty clear to me that we'll see a massive compression, and probably there'll be a couple of companies that break out. On the vertical, I'm hoping that there'll be a couple of companies that break out on each vertical. Hopefully that's Lavender and us on sales, but we'll have the same thing on legal, healthcare, and so on. And then there are interesting implications as to why the foundational model players, bucket one, actually overlap with bucket four, which is the platform players. There's no need for that overlap, but that overlap in itself has a lot of ethical implications. Because Microsoft now controls both the platforms and the models, Google controls both the platform and the models. There's no reason that should be the case. It's just coincidental. And that has some interesting implications there as well.

Heather Wishart-Smith (13:03):

Thank you. Payel, I'm gonna shift over to you. I found it really interesting that you've got this integration of drug discovery and that healthcare side of things. So could you describe how generative AI is being used in scientific tasks such as drug discovery, and then what makes it such a powerful tool for this application?

Payel Das (13:25):

Yeah, so a scientific task such as drug discovery is a great example where the space of possibilities is huge, right? You know, it's said that the number of molecules possible is 10 to the power 100. But if we look at the part of the landscape that has been human-annotated, characterized, it's only 10 to the power 4 or 5, right? So there is this big gap between what we know and what could possibly happen. And there is this big gap between the amount of labels we have and, you know, the data possible. And that's where generative AI comes into play: we can now have powerful foundation models that are being used for generative and also predictive purposes to tell us what a new entity in that space can look like and behave like.

And if we are given certain specifics, right? Drug discovery is a very complicated process. It takes more than a decade to come up with a new drug and it costs more than a billion. And most of that money goes into failure testing, right? So basically you test many, many different candidates at each stage of this process, sometimes 10,000 to get to one, and oftentimes you fail and you don't find that one, right? So what we are trying to do here is to explore generative AI models in a very controlled setting. What I mean is that we have these guardrails, that a drug has to fit certain characteristics, right? It cannot be toxic in your body, it cannot be too costly. It should be orally taken, it should, you know, clear out from your body very easily, very fast, right?

It should have a certain half-life in your body to act. And then of course, all of these targeted pathways. So, if you can imagine, it's a very controlled process that's very complicated and has a lot of downstream implications. This molecule discovery, or drug discovery, is one of those. And what we are trying to do here at IBM is to come up with algorithmic innovation, so we can put controls or guardrails on the model so that, first of all, it narrows down to the list of candidates that have a high probability of being successful when they get tested, tested meaning tested in a wet lab: they get made and they get tested against the target, right? And also, at the same time, to ensure that we minimize the chance of failure, or the chance of generating or testing a risky candidate.

So that's the challenge here. And because Srinath brought up this platform question: at IBM, we are working not just on the algorithmic side, but we are working on this foundation model as a service platform that goes all the way from the hardware to the middleware, to the software, to the interactive aspect with the end user. And we are working with the critical partners in the drug discovery space as well, where we are gonna work with them to create more proof points of this technology. It's very difficult, you know, for anyone to go, you know, generate a new molecule with these AI models, and then synthesize and test it. And we are working on that with academic as well as industry partners. So we make sure that we do evaluate these models before we go forward.

For example, we have actually created antibiotics and antivirals that we have tested in collaboration with experimental labs. And those were generated solely by generative AI models. And in collaboration with an experimental lab, we made them and we tested them. And it seems like, you know, in weeks we can get to good compounds, good molecules that can be made, you know, synthesis is a big thing, and that also work, right? For example, we found molecules that can stop SARS-CoV-2 from replicating.

Heather Wishart-Smith (17:21):

So very good, thank you. Well, I'm going to jump next to some of the audience Q&A. And before I do, I'd like to remind everyone that you can join the Fiddler Slack channel to have an all-day Q&A session with the data science team. But I'll start out with the first question, which was posed by an anonymous attendee: according to OpenAI, they don't store the input data when you use the API, which is not true when you use ChatGPT. So how do you think Microsoft will deal with it when users start loading business documents? Do any of our three panelists have a particular opinion on this? Want to jump in?

Srinath Sridhar (18:02):

I think Krishna covered this a little bit, Heather, so we can probably jump to the next one.

Heather Wishart-Smith (18:07):

Okay, very, very good. I'm sorry, I, I missed that one. So I'll jump into Renata's question. I know Srinath, you had offered to answer that one. So Renata Barreto had asked that OpenAI's GPT is used for content moderation practices as well. Since we don't have access to the training data, how can we carry out bias audits for these models?

Srinath Sridhar (18:28):

Yeah, great question. And the reason I mentioned this is we were founded in 2020 and that was the year of George Floyd. And so right from the beginning we had an angle on how we think about this, even in the early stages. So what we came up with is a list of categories that we look at. And so everybody can try to make sense of it and ask the same question for themselves. Hopefully academia, or some standards, come in that can actually solve this problem for us. But at least when we thought about it, and Krishna briefly mentioned this, at least we put some thought into it. So I'll list them all out and hopefully that is useful in itself for people. Age, appearance, and behavior is one category of things that we look at.

Food security, socioeconomic status is one category. Gender neutrality is one category, mental health, global health care and disability is one. Politics, geopolitics, and legal is the fifth one. Race, ethnicity, nationality, and religion is sixth. Science and the environment is seventh. Sex, gender, and intersectionality is the eighth. We also have a category for K-12 education, as well as a category for talent management inside companies. So these are ten categories, eight of the main ones. And then we have K-12 and, and talent management. Underneath each of these categories, we have about 50 biases that we look for. These are also societal issues. Basically includes everything from homophobia to party culture, fat shaming, triggering language aggression, and so on. So we have this full database that we look at to try to see if there are biases.

Now, half the country is going to say, that's fantastic, I love you. Half the country is gonna say you are evil. And I'm not here to preach one direction or another, but at the very least, I think the whole country can agree that these are sensitive issues and these are contentious issues, because that's factual. So when someone writes content on Regie, we look at these categories and we look at these 50 biases against those 8 to 10 categories. So whenever you're building your own system, at least for now, you can come up with your own checks here, and hopefully that becomes a standardized process that academia or a nonprofit comes out with that all of us can rely on for this.
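Editor's illustration: checking written content against a curated list of sensitive terms grouped by category might look roughly like the sketch below. Everything in it is an assumption made for illustration: the `BIAS_LEXICON` entries are a tiny hypothetical sample (the panel describes about 50 manually curated items per category), the category labels only loosely follow the ones listed above, and `flag_sensitive_terms` is not Regie's actual API.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical sample lexicon: category -> phrases a human curator chose to flag.
BIAS_LEXICON: Dict[str, List[str]] = {
    "age, appearance, and behavior": ["young and energetic", "digital native"],
    "gender neutrality": ["salesman", "manpower"],
    "mental health, health care, and disability": ["crazy", "lame"],
}


def flag_sensitive_terms(text: str) -> List[Tuple[str, str]]:
    """Return (category, phrase) pairs for each curated phrase found in text."""
    lowered = text.lower()
    hits = []
    for category, phrases in BIAS_LEXICON.items():
        for phrase in phrases:
            # Word boundaries keep "lame" from matching inside "blame".
            if re.search(r"\b" + re.escape(phrase) + r"\b", lowered):
                hits.append((category, phrase))
    return hits


if __name__ == "__main__":
    draft = "We need more manpower for this crazy quarter."
    for category, phrase in flag_sensitive_terms(draft):
        print(f"[{category}] consider rewording: {phrase!r}")
```

A lookup like this only surfaces candidates for a human to review; it does not decide whether a given use is actually biased.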

Heather Wishart-Smith (20:50):

Very good. Thank you. Casey, I'm going to come to you next for one of the audience questions from Arun Chandrasekaran. And Arun asked, do you believe enterprise organizations will need to create their own "red teams," similar to the red teaming approach of companies as they develop foundation models? And if so, what would be the kind of adversarial testing they need to do?

Casey Corvino (21:14):

Yeah, I think totally. There's gonna be a lot of testing on these models regardless, right? For a lot of the stuff that obviously Srinath mentioned, the biases, et cetera, but also accuracy. There's gonna be a million of those sort of popping up. But yes, it is a little bit hard to test these models just because of the nature of how they're built, right? We can't look into the corpus, for example, and just remove things. So there will be red teams for sure, but how those look, I think, is still being determined. Internally here, how we sort of benchmark a lot of these, specifically on sort of the bias aspect too, is we try and break it, right? We try and push things to be sort of complicated and sort of lean into biases, et cetera. And with that, a lot of it is manual. That's the grind right now: a lot of that testing is a bit of manual labor because of the way GPT's set up. We obviously automate as much as we can, but a lot of the time it's really just going in and sort of figuring out where some quirks are and how we can change our product to overcome those.

Heather Wishart-Smith (22:08):

Very good. Thank you. Payel, I'll switch to you now. We've got a question from James Farris. What's to prevent a developer from creating irresponsible AI? So AI that would be unsafe, biased, unhygienic, inaccurate, that sort of thing, and that could cause humans harm. In other words, how do we as humans control AI?

Payel Das (22:28):

Yeah, so I would say three dimensions: trust, ethics, and transparency. So these three are tied to best practice. We have been working on what we call Trust 360 at IBM, where we decompose what we mean by trust. You know, trust has many dimensions, right? It is interpretability, robustness, causality, uncertainty, and so on. So if we have access, we can break down this big problem of trust. And when a developer is building this AI model, he or she can score on each of these different trust dimensions. They can create something like a factsheet or a model card, and it'll tell the audience, or tell the end user, what is going into the model and how the model has been tested. Like typically when you buy medicine from a drugstore, right, it has an Rx label and it has a lot of details, and that creates this confidence in you that what I am taking, I know at least a bit of it, right?

And then the point of ethics: as I'm creating, or some developer is creating, a model, there are certain societal norms that we need to follow that change from application to application. Each enterprise has different ethical guidelines. So as long as the model is abiding by those guidelines, that's the way to go about it. Also, we heard about red teaming. The red teaming scenario is gonna change for each enterprise, as we are talking about doing these adversarial attacks to find where this model breaks when we know the rules or the guardrails. So going back to the developer question, right? If you know the dimensions and you know how to measure them, then the first thing is that as you are developing a model, define the trust region for that model and make sure your model is within that.
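Editor's illustration: scoring a model on several trust dimensions, recording the scores in a factsheet, and checking that the model stays inside a "trust region" could be sketched as follows. This is a hypothetical data structure, not IBM's factsheet format or toolkit API; the class name, the 0-to-1 score scale, and the per-dimension minimum thresholds are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class FactSheet:
    """Hypothetical factsheet: per-dimension scores plus required minimums."""
    model_name: str
    scores: Dict[str, float]      # trust dimension -> measured score in [0, 1]
    thresholds: Dict[str, float]  # trust dimension -> minimum acceptable score

    def violations(self) -> List[str]:
        """Dimensions that are unmeasured or score below their minimum."""
        return sorted(
            dimension
            for dimension, minimum in self.thresholds.items()
            if self.scores.get(dimension, 0.0) < minimum
        )

    def within_trust_region(self) -> bool:
        """True only if every required dimension meets its threshold."""
        return not self.violations()


if __name__ == "__main__":
    sheet = FactSheet(
        model_name="demo-model",
        scores={"robustness": 0.9, "interpretability": 0.4},
        thresholds={"robustness": 0.8, "interpretability": 0.6, "uncertainty": 0.5},
    )
    print(sheet.within_trust_region(), sheet.violations())
```

Treating an unmeasured dimension as a violation is a deliberate choice in this sketch: a factsheet that omits a required test should not pass by default.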

Heather Wishart-Smith (24:17):

Very good. Thank you. And related to that, Steven Levine has a question about how concerned are we about the huge problem of these powerful language models not having any values built into them, the challenge of coding human-based ethics. So many people are concerned that there will be this backlash to AI if most engineers are expecting someone else to fix this monumental problem. Kind of a black box, mostly unsupervised, Casey or Srinath. Do you have any anything you'd like to add to Payel's response there?

Casey Corvino (24:45):

Yeah, I have one thing, and I think that's where the chat infrastructure can really help, right? Because it gives that really good UX of being able to adjust it, right? So if something's written with a tone that you don't want, you can obviously use the chat infrastructure, that really human-in-the-loop experience, to sort of revise it, right? And give it your own voice. Specifically, obviously, ChatGPT is robotic, right? So it usually has a very neutral tone, but that's not your tone. It's not aligned with you. You feel like it's not inclusive, because it's not your tone. You can then use that chat UX to sort of go about and make those changes yourself. So it does give the user some control in those aspects.

Heather Wishart-Smith (25:21):

Anything to add Srinath?

Srinath Sridhar (25:22):

Yeah, quick data point, because I talked at length about the bias and how we detect it. So we consciously made a choice to do that manually. So we actually got a team of people to do that completely manually. So this was not automated. We had people who had disabilities curate sections on disability. We had people from around the world curate sections on, you know, race and ethnicity and national origin. So to the question, you know, that's why we're trying to be responsible in the sense that we are not letting somebody else solve the problem. We're making our best effort, while being a for-profit, to solve the problem. But I do hope that a non-profit or academia comes out with a solution that everybody finds acceptable.

Heather Wishart-Smith (26:11):

Terrific. Thanks. So Amelia Taylor has a question for Casey on the intelligence layer, saying that asking what's the best email is great. She's curious how email deliverability plays into creating the best email using best practices and then leveraging AI to simplify. Is it something you're seeing improve, or a hurdle to overcome as you're revising emails, drafting them, that sort of thing?

Casey Corvino (26:34):

Yeah, so email deliverability, we have a lot of in-house models that sort of detect the content of the email, obviously to see if there are certain spam triggers, right? This is obviously an ML model that keeps growing and learning, so it advances over time with that. What's really interesting in this space of deliverability, though, is in our use case, the way we leverage AI, again, is to assist the user, not write the whole email for them. So because of that, like for example, if you use ChatGPT to write an email from scratch, it'll basically use a lot of spam triggers, right? It'll use things like promotional offers or spam words, even like "I hope this finds you well," or sort of spam cliches, and those affect the deliverability. So because we have that very human-in-the-loop approach, it's still mostly written by the human with the assistance of the computer, it's less likely to have those spam triggers.

Not to mention, thinking about this in the bigger picture, in the future, fully generated emails are most likely gonna be flagged as spam. We've already seen OpenAI releasing their GPT text detection algorithm, where they can detect if a piece of text is written by a computer. Outlook has said that they're planning on adding some of those features into their product as well, to flag an email if it's written by a robot. So we're starting to see a big shift now from, I guess, our approach currently of detecting spam words and spam triggers with our models and building off of that, but also, in the future, thinking about how they're gonna add even more to the spam layer with the rise of generative email tools.
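Editor's illustration: the simplest form of spam-trigger detection is a phrase lookup, sketched below. Casey describes in-house ML models that keep learning; this fixed list is a much cruder stand-in swapped in for illustration. Apart from "I hope this finds you well," which is quoted in the discussion above, the phrases in `SPAM_TRIGGERS` and the function name are hypothetical.

```python
from typing import List

# Hypothetical trigger phrases, stored lowercase for case-insensitive matching.
SPAM_TRIGGERS = [
    "i hope this finds you well",
    "limited time offer",
    "act now",
    "100% free",
]


def find_spam_triggers(email_body: str) -> List[str]:
    """Return the trigger phrases that appear in the email body."""
    # Lowercase and collapse whitespace so line breaks do not hide a phrase.
    normalized = " ".join(email_body.lower().split())
    return [phrase for phrase in SPAM_TRIGGERS if phrase in normalized]


if __name__ == "__main__":
    draft = "Hi Sam,\nI hope this finds\nyou well. Act now!"
    print(find_spam_triggers(draft))
```

In a human-in-the-loop tool, hits like these would be shown to the writer as suggestions rather than rewritten automatically.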

Heather Wishart-Smith (28:01):

Very good. Payel, I'd like to go a little bit deeper into your response about drug discovery and what makes it a powerful tool, and talk a bit about personalized medicine, which of course has become more popular and more adopted recently. How do you see generative AI being used in personalized medicine, and how do you think it could be used to develop treatments that are tailored to specific individual patients?

Payel Das (28:29):

So if you recall, you know, the way we are developing these generative AI models is not just that they can do free-form generation, but that we can do generation in a very controlled manner, right? Controlling the aspect of downstream toxicity, you know, controlling the cost of synthesizing, and of course the activity, the potency. So if we take a step further, if we do have those controls in terms of how an individual is gonna react to a certain candidate being administered, what his or her family history is, right, how he or she has reacted to past treatments, and the disease progression. So if we have those parameters, those could also be imposed as controls on these AI models, and that will allow us to, you know, fine-tune the model to the personal preferences of this particular person. So that's the way to go. And that's very generic, right? That's very general. That can also be applied if I'm trying to create a chatbot that will act like me. Like, you know, if we are trying to impose a persona. So the same thing here. If we do have availability of those data, of personal preferences and personal history, then we will be modeling those as controls in our algorithm to guide the generative model.

Heather Wishart-Smith (29:42):

Terrific, thank you. So going back to the audience questions, we've got a question from Shivaram Lakkaraju: how soon will industry-wide standards or frameworks emerge and get adopted to govern the development and implementation of responsible generative AI? Anyone want to jump in there?

Srinath Sridhar (30:07):

I expect the foundational models to move faster here than the industry-based standards. So for example, similar to what Casey was saying, if OpenAI decides to say, and for the most part they do already, that if any piece of content will actually get to an end user, then the end user needs to have an ability to flag the content as bad or not. And if you enforce that sort of stuff, then, you know, they are the gating factor. So they can already set standards for all the downstream usage. So I do expect the foundational model guys to move faster there, and they can easily set standards because everybody else depends on them. And that'll just percolate pretty easily industry-wide. But I think, like GDPR and SOC 2 and so on, we will end up with newer standards as well for sure, but that might take longer.

Heather Wishart-Smith (31:12):

Thank you Srinath. Casey, how do you see generative AI impacting engineering departments?

Casey Corvino (31:19):

Yeah, so I think there's three main ways. I think the first, most obvious one is using it internally to code, right? To be honest, I think it's still not quite there yet. I still prefer just coding myself. I think it's a little bit faster than actually using GPT and revising it, but I think it's great for commenting, great for getting over coder's block, right? When you're stuck on something and you can sort of get a draft of what you want to create or get some ideas. The same way we obviously look at drafting emails with it, we can sort of draft code with it, right? And then the human picks it up and goes from there. So we see it as great in all those aspects. Secondly, going back to my point about our complex sentence transformers, we're seeing a huge push now to hire a new kind of role in the engineering departments, a linguistic expert, someone that understands language as opposed to understanding the underlying model, right?

And only has that topical approach of what defines the sentence, et cetera. And so a new position that's emerging is the prompt engineer, right? A good example of that, obviously, being that GPT-3.5 to GPT-4 was all benchmarked off of how correct the system is, not really benchmarked on how human it sounds, right? Or in our use case, obviously, how good of an email it is, right? So a linguistic expert can really apply that knowledge, plus GPT-4, to create really high-performing prompts. And lastly, and related to that, what I think is the biggest change in engineering departments is, again, how linguistics is in a way replacing a lot of these machine learning generative AI engineers. We're really seeing the structure change. Before GPT, we were planning on allocating millions of dollars to AI teams to build these single language models.

But with the emergence of these large language models, it really makes more sense now to instead put those same resources into product-focused engineers. Specifically with chat, we've seen this huge UX problem, this huge UX sort of surge, around how we interact with the models, right? And that sort of seems like what's becoming the bigger issue here: not so much the data science and the machine learning, but how we apply that with product-focused engineers. So what we're doing here is really allocating more budget to product-focused backend engineers as opposed to machine learning teams, or really generative machine learning teams. Sorry, there's still machine learning needs outside of the generative tech aspect.

Heather Wishart-Smith (33:33):

Terrific. Thanks. Srinath, what are some of the shortcomings of foundational models today and how do you think it'll change in the future? Kind of at a high level? Try not to get too technical here.

Srinath Sridhar (33:47):

Sure. I think Mary said that it was a pretty technical audience, so hopefully I can do a little bit on the technical side for them and a little bit on the higher-level side. And so we can go a little bit into the weeds. So basically, if you're working with OpenAI today, there are basically three levers that you have, and Krishna briefly alluded to this as well. One is prompts, one is fine-tuning, and one is embeddings. So those are the three things that you can work with today. And Krishna, you know, talked about fine-tuning a little bit more, but there is at least one fundamental problem with fine-tuning. And in fact, two problems with it. First is, we have regressed in fine-tuning because, you know, the Davinci-based model came out, I wanna say, in 2021, and that was the last model that you could fine-tune.

After that came the InstructGPT series with text-davinci-003, you could not fine-tune it, and then came ChatGPT. So GPT-3.5, you couldn't fine-tune it. GPT-4, you couldn't fine-tune it. So your choice is that, let's say that I have a bunch of data, I have a bunch of copyrighted material, I have a bunch of copyrighted images. My options are to use a year-and-a-half-old model that I can fine-tune, in a fast-moving space, or to actually use the latest models, but then I have to use all the data that OpenAI used and I cannot use my own copyrighted data. So that's a little bit of a challenge, because we've actually regressed on that ability to add my own corpus of data to create my own model. The second problem with fine-tuning I see today is you can only give it positive examples.

You cannot give it negative examples. And it's a huge limiting factor, because if you look at the way ChatGPT was trained from GPT-3-based models, they actually took real-valued weights for each sample. So you can actually say, here are three sample inputs and sample outputs. I can rank-order them into the best, second best, and third best. You can actually come out with real-valued results for it and feed it back into fine-tuning. That ability is not exposed to the public, and it's a pretty crucial ability to be able to mark something as positive and negative, because we can only mark stuff as positive right now. Last is embeddings. And embeddings along with prompts is today the solution for the lack of fine-tuning. Because what the answer seems to be is to say, I would take the documents, I would find the closest documents using embeddings, I would put that closest document into my context window, which they have kept increasing.

And then I have my prompts and I have my completions based off it. This seems like a hack to me that is very short-term. I think eventually this API doesn't look like this at all, in my mind, because if you look at all the APIs we have built with databases and so on, you don't pass the set of documents when you do the search query. I mean, the database index is built ahead of time, before you do the search query. So, very similar to what Krishna was alluding to, what the APIs going forward are going to look like is, in my mind, very, very clear. The API in my mind would look like a model that OpenAI can host if they wanted to. You have the ability to add a document to the model, you have an ability to remove the document from the model, which goes back to Krishna's point on the fact that when you remove a document from the model, you wanna remove all the training that was done from that sample.

And then you have the ability to fine-tune with weights, so with both positive and negative examples, and then embeddings are actually embedded into the model itself. So you just do prompt completions from the model. So I don't think you can go from prompts being 4K to 32K to 1GB and get away with it. So that's how I see the OpenAI and foundational model APIs changing. And it seems pretty clear that that's the direction it would have to go. And if OpenAI goes that direction, that's great, otherwise there is a gap for somebody else to fill.
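Editor's sketch: the embeddings-plus-prompt workaround Srinath describes (find the closest document, place it in the context window, then prompt) can be illustrated roughly as follows. This is a minimal sketch under stated assumptions, not the OpenAI API or anything the panelists showed: `embed` is a toy bag-of-words stand-in for a real embedding model, and `retrieve`, `build_prompt`, and `DOCS` are hypothetical names invented for the illustration.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents whose vectors are closest to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Stuff the retrieved documents into the prompt as context."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


# Hypothetical corpus, for illustration only.
DOCS = [
    "Refunds are issued within 14 days of a returned order.",
    "Our office is closed on public holidays.",
    "Shipping to Canada takes five business days.",
]

if __name__ == "__main__":
    print(build_prompt("How long do refunds take?", DOCS))
```

Note that the documents travel with every query here, which is exactly the property Srinath calls a short-term hack: nothing is indexed into the model ahead of time, so the context window bounds how much private data can be used per request.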

Heather Wishart-Smith (37:51):

Terrific, thank you. Quick question now for Payel from Deepak from the audience. I do want to remind everyone that the Slack channel is open all day, so please don't hesitate to go in and post your questions there as well, and to chat with some of Fiddler's data scientists. But Payel, from Deepak: could you shed some light on interpretability and explainability of generative AI in high-stakes settings like healthcare?

Payel Das (38:19):

That's a very good question. Yeah. So in the context of generative AI being these foundation models, right? So we have seen that language models can not just output, but can also say what was the path it took, in the form of chain of thought, right? How it got to the final answer. So one can adopt the same thing. Like if we are trying to come up with, let's say, a treatment recommendation, then the model can provide, you know, the chain of thought or chain of reasoning of how it came to the final recommendation. The problem with any sort of interpretability or explainability is that first, in this particular case, chain of thought, we don't know if that was the exact path the model took to come to the final answer or not. And second, how do we know that is the best path or the best, you know, explainability or the best interpretability?

So here, there is no way to go without having the experts in the loop. Like if we are trying to say, you know, whether a certain drug can have an adverse effect or not, the only way to make sure is to look into the historical data and see, you know, if my model, my foundation model, my generative AI model is capturing those historical data points well in the model, and so the explainability is consistent with the known data. Or if I create a new set of explanations and then, you know, some expert says that, yeah, it looks sensible to me, it looks like, you know, it can happen because of the prior domain knowledge, then I think, you know, that explainability and interpretability aspect makes sense. The bottom line here is that a model can provide explainability at the input level, at the model inner-working level, but unless we bring the domain knowledge, the SME, into the loop, there is no way to make sure that explainability makes sense.

Heather Wishart-Smith (40:00):

Thank you. Going back then to, I'll start with Casey. How do you handle data privacy issues? This is another question from our audience.

Casey Corvino (40:08):

Yeah, so obviously we're in the process of getting our SOC 2 Type 2 right now, GDPR, all those certifications, and making sure that we're being as secure as possible. But the biggest thing there is, and obviously this goes with any big data company, we try to reduce what we save that's sensitive. And if we have to save something that's sensitive, we're always de-identifying. We're always encrypting, hashing, et cetera. But the main thing there is, again, if we don't have to save it, we don't save it. Specifically with emails, we don't even save emails. And for the odd chance of saving blocks of text, it's always de-identified with industry standards and secured with 99% or higher accuracy.
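Editor's sketch: Casey does not describe Lavender's actual pipeline, so the following is only one common way the "de-identify and hash before saving" idea could look. It assumes email addresses are the sensitive field and replaces each one with a keyed hash (HMAC-SHA256) so the stored text contains no raw address. The names `EMAIL_RE`, `pseudonymize`, and `deidentify`, and the secret key handling, are hypothetical.

```python
import hashlib
import hmac
import re

# Simple pattern for illustration; real de-identification covers far more
# than email addresses (names, phone numbers, account IDs, and so on).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pseudonymize(value, key):
    """Map a sensitive value to a stable token using a keyed hash."""
    digest = hmac.new(key, value.lower().encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def deidentify(text, key):
    """Replace every email address in the text with its keyed-hash token."""
    return EMAIL_RE.sub(
        lambda m: f"<email:{pseudonymize(m.group(0), key)}>", text
    )


if __name__ == "__main__":
    secret = b"example-key-kept-outside-the-datastore"  # assumption: managed secret
    print(deidentify("Follow up with jane.doe@example.com on Friday", secret))
```

Using a keyed hash rather than a plain hash means someone holding only the stored text cannot confirm a guessed address without the key, while the same address still maps to the same token for deduplication.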

Heather Wishart-Smith (40:47):

Terrific. Thank you. Srinath, any response to that from you?

Srinath Sridhar (40:51):

Almost precisely the same, to be honest. So I can save people time. Also GDPR and SOC 2 compliant, exact same thing on the data scrubbing side. I would say, look, this problem has been faced before. For example, take a look at Grammarly, right? Everything that you type in Grammarly is at Grammarly. And so this problem has been faced before in this manner. The bias problem, at least, is a very different and new problem for sure.

Heather Wishart-Smith (41:21):

All right, thank you. Well, we just have a couple of minutes, so I'd like to give each of you the opportunity to provide any closing thoughts. Payel would you like to add anything?

Payel Das (41:30):

Yes, I think, you know, while these foundation models are really promising, I mean not just promising, at this point they're successful, they still lack a lot of abilities that we humans have. We don't need a lot of prompts to learn something. We don't need a lot of data to learn something new. So what I would like to see as the next step is, first, how do we ensure, like, you know, that a model can learn to generate its own guardrail that is consistent with, let's say, a constitution or a principled framework. That's one. And second, like, if we can make these models a little bit more principled, a little bit more foundational in the sense that there is a theoretical foundation underlying them, right? They can learn from a small amount of data, or like, you know, if there is a pre-trained model and we can only show a few new instances of a different concept, it can be able to, you know, grab it immediately.

So these are the two tracks where I do think a lot will happen. You know, on the practical side, right, we are gonna see a lot of realization of these generative-AI-provided recommendations. That's definitely exciting. But I think on the research side, or on what's gonna be the next frontier of AI, I think that's where, you know, the use of these models with a responsible framework comes in. The framework: how we create it, who creates it, how we implement it, how we ensure it, and what are gonna be the legal and societal, you know, complications and implications of such risk. That's one. And second, the new architecture or new way of learning, not just model architecture, but the new way of more human-like learning.

Heather Wishart-Smith (43:01):

Outstanding. Thank you. Srinath. Any last thoughts?

Srinath Sridhar (43:05):

Yeah, I'll keep it short. What's most exciting for me is the vertical apps. So if you think you have an edge on some vertical, it's a great time to start a company and ask what does generative AI do in that vertical. Second thing that I'm excited about is what Krishna said, which is we have seen a lot of general purpose models built out. I'm looking forward to seeing models built out with private data sets and copyrighted data sets that you, yourself own, whether you're an artist or a writer, and hopefully that that boom is coming.

Heather Wishart-Smith (43:32):

Thank you so much. And Casey, close us out here with any last thoughts.

Casey Corvino (43:36):

Yeah, I'm just really excited to see how people build with the democratization of these large language models. Really anyone with a computer can build really cool applications, really cool AI applications now. Just hearing about some of the cool stuff happening in the prescription drug space, that's literally life changing. Something I never would've thought about, but there's gonna be so many of these different paths now taken that are literally gonna revolutionize the world. And I think it's all due in part to this democratization, can't do that word, of these large language models.

Heather Wishart-Smith (44:07):

All right, well, a big thanks to all three of our panelists, Srinath, Payel, and Casey. And Mary, we'll turn it over to you.

Mary Reagan (44:15):

Yeah, thanks so much. Really interesting discussion and thank you so much, Heather, for a great job moderating and just appreciate everyone being here today.