AI Forward - Leadership Perspectives: Use Cases and ROI of LLMs

In this panel, industry leaders shared their insights on LLM implementation and their significant impact on business ROI in their respective fields. They explored not only the innovative applications and ethical dimensions of AI but also its crucial role in enhancing operational efficiency and competitive advantage, highlighting its profound societal and economic implications.

Key takeaways

- Strategic Customization of LLMs: Companies must strategically tailor large language models to meet specific use-case demands, ensuring solutions are vertically aligned to address unique industry requirements.

- Defining the ROI in LLM Deployments: It is essential for businesses to articulate and define the expected return on investment for each LLM application, focusing on converting predictive models into dynamic resources that significantly enhance outcomes in critical areas and drive economic and societal value.

- Human-in-the-Loop for Generative AI: GenAI deployments in large enterprises, both regulated and non-regulated, need human-in-the-loop oversight to ensure ethical and effective use. Maintaining a balance between leveraging AI's capabilities and adhering to stringent data privacy and security standards is crucial, particularly in highly regulated industries. This approach aligns AI advancements with corporate governance and ethical responsibilities.

- Continual Learning and Humility in AI: In the dynamic field of AI, continual learning and humility are essential. Recognizing the rapid industry evolution and the gap between public perception and the reality of AI capabilities is key, alongside embracing the uncertainty and knowledge gaps inherent in this fast-evolving sector.

- Mainstream Adoption of Generative AI: The transition of generative AI from niche to mainstream technology is marked by its widespread integration across organizational workflows and its affordability, making it as commonplace and essential as search engines in business processes.

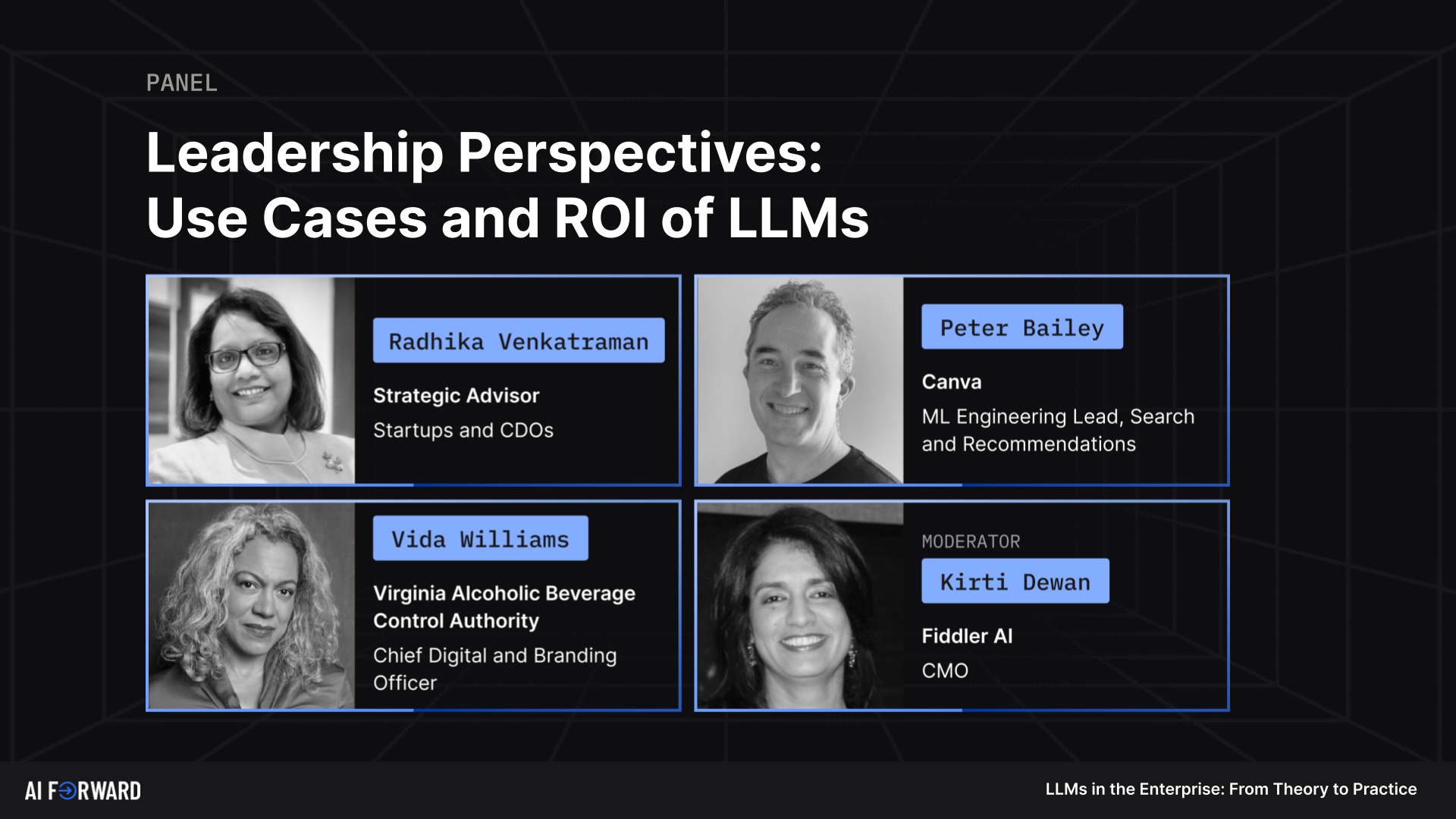

Moderator: Kirti Dewan - CMO, Fiddler AI

Speakers:

- Radhika Venkatraman - Strategic Advisor, Startups and CDOs

- Peter Bailey - ML Engineering Lead, Search and Recommendations, Canva

- Vida Williams - Chief Digital and Branding Officer, Virginia ABC

[00:00:00]

[00:00:04] Kirti Dewan: Hello everyone and thank you for joining the last session of the day and thank you for making it this far. I will be the moderator for this panel and for those who may have missed my introduction earlier on, I am the Chief Marketing Officer at Fiddler AI. I am joined by a passionate and diverse group of panelists in terms of industry, where their companies are at with LLMs and how they are approaching AI in general.

[00:00:31] Kirti Dewan: Vida Williams is the Chief Branding and Digital Officer at Virginia Alcoholic Beverage Control Authority. For those not familiar, Virginia ABC is a retailer, wholesaler, and regulator of distilled spirits. As a revenue generating agency of the Commonwealth, Virginia ABC does not rely on state funding.

[00:00:50] Kirti Dewan: Peter Bailey, joining us from Canberra in Australia, is the ML Engineering Lead for Search and Recommendations at Canva. Radhika Venkatraman is a Senior Advisor at Cerberus Capital Management and Chairperson of the Board at LiveWorth. Vida, Peter, and Radhika, welcome. Thank you so much for being here.

[00:01:13] Kirti Dewan: Okay, let's jump right in. Um, this is, I'm very excited with how fun this conversation is going to be because we bring such different perspectives. All of you bring such different perspectives to the table. Okay, so question for everyone. And, um, the question is, NLP tasks, chatbots, summarizations, content generation, content reviews, there's prompting, there's RAG, there's fine tuning.

[00:01:39] Kirti Dewan: On the other side of the matrix, you have large language models, small language models, open source, closed source, internal use case, external use case. There is a lot going on across different departments, across different companies. So, where are you at? What use cases have you deployed, and which ones are on the roadmap to be deployed?

[00:02:03] Kirti Dewan: Vida, should we start with you?

[00:02:05] Vida Williams: Sure. Um, because it's interesting, when we were start, when we first started talking about this, this topic, I walked into Virginia ABC, and of course they're an authority, but they're still associated with a state agency. And so the development of the practice of data is still in its earliest stages.

[00:02:29] Vida Williams: It's still in that space of defining the possibility of the data and making sure that there is fidelity in the data. And I think what is interesting is, more often than not, that is the place, the starting place, where many of us are having the conversation about AI. Now, I get to juxtapose that, however, with the fact that I'm an advisor on a very large scale education project that just has volumes of data, especially post-COVID data.

[00:02:59] Vida Williams: And in that particular case, we are already in the space of culling data, hypothesizing regarding the context, strategically looking at the different questions, organizing the data, running, um, using machine learning to run simulations of the hypothesis, and thus preparing for testing and training in AI.

[00:03:23] Vida Williams: And so looking at those two things almost daily, simultaneously, is a little bit of cognitive dissonance, especially in the context that we're in, um, as your question so, so wonderfully put, where there's a whole world of data practices happening. So, I, I'm kind of in that moment of both and, um, and some days I can even keep it straight.

[00:03:49] Kirti Dewan: Makes sense. Um, it is pretty overwhelming. Uh, Peter, how, how is it at, at, uh, Canva?

[00:03:57] Peter Bailey: Uh, I think yes, overwhelming is a, is a great description, um, uh, so in terms of the prompting with context, the retrieval, retrieval-augmented generation, and fine tuning, doing all, all of those types of, uh, deployment. So it's part of our, um, product, uh, experience with the Magic Studio release in October.

[00:04:20] Peter Bailey: For example, prompting with context is used by a Magic Write feature. The RAG approach is used as part of our Magic Design feature. And fine tuning is being used as part of our Magic Media feature. And there's, there's plenty more in, in that Magic Studio release of different things being used in different kinds of ways.

[00:04:37] Peter Bailey: Uh, and some are very much the experience, um, of that feature, whereas others are sort of more embedded or supportive of an experience. Um, one other thing that we've been finding interesting as well is maybe just using, um, LLMs to sort of fast track prototyping, um, internally. So it may not actually go out

[00:04:56] Peter Bailey: to a product experience, but allows us to explore some alternatives and ideas very quickly. And that, that's been another kind of accelerating, um, uh, enabling, uh, ability with, with the use of this technology internally.

[00:05:11] Kirti Dewan: Makes sense. On that note, Peter, between external and, uh, internal and external use case, where you just said internal prototyping versus maybe an external experience, we just ran a poll, and the audience, at about 44 percent, say that right now they are looking at LLM applications for internal use cases.

[00:05:31] Kirti Dewan: Um, Radhika, how about you?

[00:05:34] Radhika Venkatraman: All right. First of all, thank you for having us. It's a pleasure to be here. I'll give you two perspectives, one from what I'm seeing in the companies that I advise, and a little bit of what we're doing in our own startup. From where I sit for companies that we advise, I think what I'm seeing is that almost everybody is at least dabbling in it.

[00:05:55] Radhika Venkatraman: So there is hardly any company that is not attempting to do it. Unlike in previous rounds of technology, where you may have people waiting to see whether they want to be a slow follower or not, at least there is tremendous interest and everybody is dipping their toe. I would call them as AI tinkerers.

[00:06:12] Radhika Venkatraman: And so I would say there's almost 90 plus percent AI tinkering going on. In terms of going to production, I would say it's not that big a number, okay. I would say the marketing and the sales team and wherever you can repurpose content, regenerate content, reuse content, you're seeing a lot of early adoption.

[00:06:33] Radhika Venkatraman: But when it comes to the real core applications, such as like, you know, contact center or technician productivity, and so on, uh, people are actually attempting to build those applications as we speak, either with partners or with hyperscalers in combination with do it themselves. In my own little startup company, I would say we're looking at how to integrate generative AI for geospatial analytics at scale.

[00:07:01] Radhika Venkatraman: That's a little bit harder problem than like, you know, text repurposing, because it's not only data, it's streaming data that is geotemporal in nature. And so that's something we're just prototyping.

[00:07:13] Kirti Dewan: Right, right. Yeah, um, couple of our polls from earlier sessions as well indicated the same thing that this is in the, you know, it's between the exploration stage to having something in place already, whether it's LLMs or LLM applications.

[00:07:30] Kirti Dewan: And then, um, there was a decent level of, of observability in place as well. But still, it's, it's not the majority, as you said, um, uh, Radhika. Um, Vida, you have been quoted as, my goal is to create an infrastructure for economic development, community engagement, and customer service in ways that provide access as well as public safety and responsibility.

[00:07:59] Kirti Dewan: In what ways do you see LLMs and generative AI helping you achieve your vision?

[00:08:05] Vida Williams: But I think for me, this is, it's a question of historical continuum. So a lot of us believe that this kind of use case for large scale data happened right now or happened, you know, in the past 20 years, you know, where, um, humans are impacted by decisions that are happening with data.

[00:08:28] Vida Williams: And I can make an entire argument that actually it was probably back in 1896 when, um, the actuarial treatise was, was published that declared who was going to be, um, eligible, right? Who was a good risk financially? Who was a good risk for coverage? Like those are the first vestiges that we get of data really looking at human, human interaction, human condition, ecological and environmental interactions, and declaring the quality of human life.

[00:09:08] Vida Williams: Now, if we fast forward and we look during the heyday of, um, we're just starting to do facial recognition, right, which is another very early, um, early, early tool set for hey, we can do this automatically. And we're looking at broad mishandling of facial recognition as it has to do with recognizing faces.

[00:09:33] Vida Williams: Um, when it looks at, um, the, the, the diversity of recognitions.

[00:09:39] Vida Williams: Now we're here and we are in just this explosive and expansive moment of we have now the volumes of data that are representing humans. And I think that that's, that's what is so amazing to me is that we are now able to kind of record and see and touch and feel and experience human interaction at a very intimate level, but I don't know that we are interpreting that as an industry, that that's really what we're doing.

[00:10:10] Vida Williams: I think we might be still bordering on tech for tech's sake, like, whoa, it's exciting that we can run this model. And so my, my goal kind of in what I do and how I'm choosing products on one hand, as I just explained, I'm literally looking at the intersection of retailing, um, and controlled substances. So, when you think about that, right, retailing a controlled substance and having to intersect that with the health and safety of a community, or on the exact opposite end of the spectrum, we have volumes of data.

[00:10:44] Vida Williams: How can we more concretely look at the dynamic levers required to ensure that we're hitting educational standards, and that's a very dynamic, and at this point, because of the volume of data, we can do so with a sensitivity, um, to include dynamic lever pulling, if you will, right, decision making, but only if we're willing to take a step back and consider with a great deal of empathy and sensitivity the context through which we are looking at these human interactions.

[00:11:19] Vida Williams: If we decontextualize them, then we cannot do it at all. And especially when we're talking about large language models, we have to really consider how we use the systems of language to communicate with one another, and whether those models are reflecting all of the nuances in the languages that we use.

[00:11:39] Vida Williams: And that's just a very small sliver of that, but it becomes exacerbated after that. And so for me, how do I see LLMs and AI achieving my vision? I think it's going to depend on whether we are willing as an industry and as corporations who are innovating in these spaces to take a step back and ask who we are affecting and how we're affecting them.

[00:12:04] Vida Williams: And I think that we're in that moment where we can ask and act on that question. And some of us just need to be bold enough to do it.

[00:12:11] Kirti Dewan: Right. Yeah, I think adding to this whole dimensionality is also where we talk about large and, uh, small language models, if you will, and, you know, what's, uh, within the organization and what isn't, and it's, it's as though we would need vertical-specific

[00:12:28] Kirti Dewan: um, um, uh, applications as well, right? I know that we're talking about domain specific has already entered, um, the, the terminology, but, uh, our vocabulary, but we need to probably think about this in terms of societal good, uh, from a not for profit perspective and then, uh, from a profit perspective as well.

[00:12:50] Kirti Dewan: Yeah, so that, that's, uh, that's really interesting. There's so much that we can, we can say there. Fiddler, Fiddler's mission itself is, you know, build trust into AI. So, uh, it's really fascinating to, to hear everything that you just said. Um, on the, uh, on the top three metrics, so moving on to, um, the ROI piece of this panel.

[00:13:12] Kirti Dewan: So, in terms of metrics that you think about, um, that are important to your organizations with respect to LLM use cases, what are the top three that come to mind? And how do you define ROI for these use cases? Peter, how about we start with you?

[00:13:33] Peter Bailey: Uh, yeah, fantastic question. Um, the, uh, like Canva's mission for those who don't know it is, uh, to empower the world to design.

[00:13:42] Peter Bailey: So we look at metrics broadly that are indicative of that, um, that mission. So are we succeeding in the number of publications that people are able to complete and the levels of product engagement more generally? In terms of ROI, I think it's more complex, especially at this early stage of design AI. We do look at things like utility metrics and the search discovery parts of the user's design journey, which I'm sort of specifically familiar with.

[00:14:11] Peter Bailey: And we can think of this broadly as kind of a trade off between success versus effort. Uh, and there's also more subtle questions about people's sense of satisfaction or delight or conversely frustration or annoyance when things go wrong. Um, and it's, it's just not really always easy to quantify these more sort of subjective measures in a, you know, kind of, um, at-scale manner.

[00:14:33] Peter Bailey: So, yeah, I think it's sort of deeply early stages, but overall we'd, we'd be saying, are we finding these, um, technologies additive? Um, overall for the customer experience and their ability to get their designing, um, goals met.

[00:14:54] Kirti Dewan: That sounds, uh, that sounds great. We have questions coming in from our poll where the top three metrics that are tying for first place are cost savings, operational efficiency, and innovation and competitive advantage.

[00:15:12] Kirti Dewan: So our question was, what are the top three ROI areas that would be most beneficial to you? Um, Radhika, what, what, what are your thoughts on this question?

[00:15:25] Radhika Venkatraman: I, I will not repeat what was already said in the poll. I think most people would be thinking about ROI not very differently than any traditional ROI.

[00:15:34] Radhika Venkatraman: But I think there's a few different things I would like still keep in mind, right? I recently heard a professor that said, uh, one person's automation is another person's augmentation. And so what does that really mean? Basically, if you are able to take, uh, the new and the not so good workers and make them all very good.

[00:15:55] Radhika Venkatraman: Then you should, by definition, get productivity. Now, if you're very good, you may be a little bit upset that you made everybody else as good as you, but in general, I think, uh, you know, this could be a great equalizer. Does that mean you get more productivity or you get more output and therefore you can get more revenue?

[00:16:12] Radhika Venkatraman: I think that's a very open and big question, if you will. Uh, the other thing I would say is, look, throughout humanity, whether you take, like, the first industrial revolution or where we are right now, uh, every time a new technology was introduced, there was always a big brouhaha that, like, you know, there's doom and gloom.

[00:16:31] Radhika Venkatraman: But the economic study of every revolution has shown that the new technology has more good than evil, if you will. So if you believe that to be true, I think the same could apply now too.

[00:16:47] Kirti Dewan: That's a, that's a great take and I, I love the line that you just shared, uh, uh, from the professor. I'm going to start using it as well. Um, Vida, how about yourself?

[00:16:59] Vida Williams: So I'm going to take this one slightly different because I, I tend to be on innovative products. Um, and so, right now, I would say in the, in the area of education, the biggest use cases that we have before us are as follows.

[00:17:15] Vida Williams: Um, we are very adept at predicting the success or not of a student's pathway. That, those models, I think, have been minted. What I am fascinated by is the potential for the volume of data that we now have available to us to show us dimensions wherein that prediction can be altered or changed, right? And I think that that is, to me, the big success or the ROI on that, because if you can actually, if we can change the model from being a hardened prediction to being dynamic based on dimensions, especially in the areas of education and healthcare, then we can actually increase the quality of, of, of life, we can increase the quality of experience, we can increase the quality of X, Y, Z, right?

[00:18:12] Vida Williams: And so, for me, especially in this particular case, it is, the, the ROI is determining that we can figure out what dimensions, which features in their composite could actually change a prediction from being providence to being a dynamic set of pathways. And I think that that is the evolution of personalization, right?

[00:18:40] Vida Williams: And it's an evolution of personalization that takes the mitigation of bias that we've had to deal with for so long. It moves it to productive understanding of context. And I think those things together, while very complex, are going to give us a new scale for return on investment, um, especially in core areas like education and healthcare.

[00:19:05] Kirti Dewan: Yeah, that's, uh, that's super interesting. There's so much value to be seen across just a multitude of industries and how it can shape humanity and accelerate humanity's values, not just progress and innovation, but actually values as well, right? The actual values that we, that we, that we hold dear to our hearts and how we, what we want to pass on as well.

[00:19:32] Kirti Dewan: There's a nice question, uh, in the, from the audience. So, uh, speaking of ROI for LLMs, in your own businesses, did you build an LLM or use available models and integrate them? And it's a two part question. So how did you make the decision of whether you were building your own or you were basically going to buy?

[00:19:53] Kirti Dewan: So is it build or buy? And are you building an LLM that's available or using available models and integrating them? I think, Peter, this would be a nice one for you.

[00:20:08] Peter Bailey: So certainly to date, we've been buying rather than building. But, uh, the sort of trade off between looking at, um, where the value lies in the sort of specific use cases for what we're seeking to do and the availability of, um, appropriate data to build that in the context of design AI is stuff that we continue to, uh, investigate deeply.

[00:20:36] Peter Bailey: Probably won't speak much more than that, um, on this one.

[00:20:41] Kirti Dewan: Um, Radhika, any thoughts from you?

[00:20:44] Radhika Venkatraman: I think the notion of build is actually more complex when it comes to generative AI than it has been in previous versions of technology. Part of the reason is you, the cost of training, the cost of inference. And so it requires like tremendous amount of expertise everywhere that I see people are.

[00:21:03] Radhika Venkatraman: So, I think there are a lot of companies that are buying, or rather I would say trying, many, before they hone in on who to buy or what to buy. But I have not seen too many companies say they want to build. There are a handful, and I won't name them over here, that we all hear about. They are very large companies.

[00:21:21] Radhika Venkatraman: They generally have the ability to do many years of patient investing. But if you're anything less than very large, I think people have been looking to buy.

[00:21:33] Kirti Dewan: Right, right. Yep, makes sense. Um, Vida, how, how is it working out at Virginia ABC?

[00:21:41] Vida Williams: So, Virginia ABC, we're not close enough to the quandary, is what I would say, to have made that decision to use or buy.

[00:21:50] Vida Williams: What I would follow along with the comments that have been made is, is buying is probably going to be where we would start that process. Um, the question then becomes, which aspect are we looking at harvesting, right? Is it controlled substance? Is it community health and wellness? Is it retailing? Is it some cross dimension of them all?

[00:22:16] Vida Williams: Um, so, so that's where I would, I would suggest, that's where we would start there, um, pretty clearly.

[00:22:24] Kirti Dewan: Great. Uh, Peter, one of the things that, um, you said a few minutes ago was, uh, one of Canva's, uh, values, uh, is to be a force for social good. What impact does it have on Canva's capabilities when users opt out of having their data used for training?

[00:22:50] Kirti Dewan: And so, how do you balance the need for high quality data with the ethical considerations, right? Right, I was talking a little bit about that as well. So, how does Canva balance the need for high quality data with the ethical considerations around data privacy and user consent? And

[00:23:08] Peter Bailey: Uh, so Melanie Perkins, our CEO, put it really well recently, um, uh, saying that we need to ensure all our AI is ethical and also feels ethical, and we believe in giving our customers control over their data

[00:23:25] Peter Bailey: and the privacy of that data, and also that it is possible to create world leading AI capabilities with that privacy and consent in place. And so we've rolled out those, those customer controls. Um, we're also working with our creators community, uh, who provide huge amounts of content, um, that populate Canva's, um, uh, template and media libraries, um, to, to make sure that we can fairly compensate those that consent to having their content used to train our AI systems.

[00:23:54] Peter Bailey: Uh, we won't train AI models on creator or user design content without their permission, period. And our hope is to be able to be very global here so that we can be representing many different languages and cultures and ways of approaching design for visual communication. So I think it really becomes a question of, um, what data, um, do we need for specific first party ML model training or fine tuning of third party ML models?

[00:24:21] Peter Bailey: And then do we have enough of that? And if not, how should we go about obtaining it? Um, you know, there's nearly always ways to obtain data, but it's a question of how you go about obtaining that data and how you compensate and communicate it. One thing that's been pretty exciting, I guess, over the last two, three years...

[00:24:38] Peter Bailey: Um, and I sort of, uh, uh, worked on this at Microsoft as well, is this emerging recognition of the ability to use multimodal LLMs to both create synthetic data and annotate it at a level that's pretty much comparable to, um, human annotators for at least baseline quality for model development and evaluation.

[00:24:57] Peter Bailey: And again, that's for particular kinds of tasks that are amenable to this approach. So, you know, not all tasks, you can get that kind of, um, synthetic creation. Uh, and some of my old colleagues from Microsoft actually recently wrote a paper about this, which is up on arXiv, if you're interested in reading up more on this topic.

[00:25:13] Peter Bailey: So certainly in the kind of information retrieval, um, relevance annotation world, I think there's kind of emerging recognition. It's, it's a doable thing to get really high quality annotation.
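
As an illustration of the comparison Peter describes, one common way to check whether LLM-generated relevance labels are "comparable" to human labels is to measure chance-corrected agreement between the two. The sketch below uses Cohen's kappa, which is an assumption on our part and not something the panel names; the label lists are hypothetical toy data, and `cohen_kappa` is an illustrative helper, not part of any tool mentioned in the session.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Fraction of items where both annotators gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        # Both annotators used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical relevance judgments (1 = relevant, 0 = not relevant).
human_labels = [1, 0, 1, 1, 0, 1, 0, 0]
llm_labels = [1, 0, 1, 0, 0, 1, 0, 1]

kappa = cohen_kappa(human_labels, llm_labels)
print(f"Cohen's kappa between human and LLM labels: {kappa:.2f}")
```

A kappa near 1 indicates the LLM labels track the human labels closely, while a value near 0 indicates agreement no better than chance. In practice a team would compare this against the agreement between two human annotators on the same items.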

[00:25:24] Kirti Dewan: Nice. Uh, do you know the name of the, uh, paper, Peter, because we could post it so that our audience can look it up.

[00:25:29] Peter Bailey: I'll dig it up and post it for you. Yeah, I can, I can find it quickly.

[00:25:33] Kirti Dewan: Okay, great. Um, so yeah, I guess the trust and safety team at Canva is really, uh, staying busy, right. With, uh, with, with everything that they're working on your different product lines as well. I think there's Magic Studio and then also Canva Creative, as you said.

[00:25:48] Kirti Dewan: Um, follow up question, Peter. Uh, from the audience, how do you think about data and business model privacy given "buy" may also be sharing slash leaking aspects of business?

[00:26:03] Peter Bailey: Yeah, I guess, like, you know, you obviously want to think carefully about your, um, contractual relationship with your, um, uh, model providers and be really clear about what the terms and conditions of the interactions look like.

[00:26:18] Peter Bailey: Um, so, uh, that's a, that's a kind of, um, you know, risk, standard contractual risk that you need to understand and, and be clear about, um, certainly something we take very seriously. Uh, so, yes, there are risks, but I think they're manageable.

[00:26:39] Kirti Dewan: Got it. Um, Radhika, you have worked at mega large companies, right?

[00:26:46] Kirti Dewan: Credit Suisse, Verizon, uh, to name a few. You have, uh, you, you're consulting with, uh, uh, uh, startups. You are advising other companies as well on the AI journey. So for these large companies, whether they are regulated or non regulated, how are they looking at generative AI in the context of their applications, whether it's mission critical applications or just business critical applications?

[00:27:15] Kirti Dewan: We saw, we've seen throughout this summit and from the poll that just happened, uh, that organizations right now are still looking at the internal use case. So, whatever these, the context of these applications may be, how are they looking at this?

[00:27:28] Radhika Venkatraman: Right. So, number of things, right? First of all, if you're a large enterprise, then some of the topics we touched on.

[00:27:35] Radhika Venkatraman: Data privacy becomes extremely important, having guardrails around risk and compliance and all of that becomes important. Nobody is allowing any application to go to production without at least a human having validated it. So it has to be some kind of a human oversight for it. But equally important is when they start buying models, they need to be able to, uh, you know, be able to trace, if you will, copyright.

[00:28:01] Radhika Venkatraman: Otherwise, like, what is the indemnification around that itself? The most important question that keeps coming up is nobody who is running a large application that is affecting their mission critical processes wants to send all of their data outside their security perimeter. So when that starts to happen, the question is, do you run it in a VPC dedicated to you, or do you start to run it on premise?

[00:28:28] Radhika Venkatraman: So you may be buying somebody's model, but you may still be wanting to host it on prem. So for the peripheral use cases, which does not require a lot of customer data, PII data, and all of that, people are still comfortable using cloud-based applications without such strict rigor, if you will, but if it's healthcare, if it is financial services or telecom, they're all looking at VPC as well as, uh, you know, on prem deployments with a lot of ability to trace not just training data, but also making sure none of their data goes into the base model itself.

[00:29:12] Kirti Dewan: Yeah, that makes, uh, that makes a lot of sense. I think it was in, I can't remember if it was in Lucas's session or not, or it was in another session, but, uh, Lucas is the CEO of Weights and Biases, and he was talking about how when he speaks to, uh, executives, at least in very tech forward companies, they are still quite skeptical

[00:29:33] Kirti Dewan: of how, um, LLMs could definitely, you know, be, be helping them. And it's in these very large enterprises where you see that these use cases are really emerging. And, uh, the use cases are emerging. Sure, you have to balance it with all these other concerns as well. But, uh, there is definitely that drive to move forward with innovation.

[00:29:55] Radhika Venkatraman: Yeah, I mean, this is probably where, like, companies like Fiddler could be really helpful. Even when you have models that are not Gen AI, one had to regularly do model risk management and all of those compliant features. You got to share with the Fed and the SEC and everybody. So with LLMs, I cannot imagine people just saying, I just ran it for my risk processes and don't have like the full traceability of it, you know.

[00:30:20] Kirti Dewan: Right, right. Yep. Um, what are, what are some, um, use case implementations? This is an audience question. Um, which use case implementations will indicate that generative AI has turned the corner from hype into mainstream? Radhika, any thoughts?

[00:30:41] Radhika Venkatraman: Um, to me, I think there's like two different indicators. One is whether you can start to embed AI in all your processes.

[00:30:49] Radhika Venkatraman: Like when we started to talk about AI, ML, and so on, even before GenAI, it was always like one center of excellence and one department doing it on behalf of everybody until everybody started implementing some level of machine learning in each and every one of the processes, whether it is front office, middle office, back office, it did not matter.

[00:31:11] Radhika Venkatraman: So that level of ubiquity I think will start happening with generative AI being embedded in the workflows. And the second is it's not just going to be these very large companies that can afford it. It has to become affordable enough that everyone can use it. Because right now we've all seen the product market fit from a consumer perspective.

[00:31:31] Radhika Venkatraman: You can ask ChatGPT, it'll give you answers, but is it that ubiquitous when you go to work? Can you ask something in your company and get all of your answers? It just has to become like search, in my opinion.

[00:31:45] Kirti Dewan: You're right. Yeah, uh, Krishna actually brought that up in the same chat with Lucas, that LLMs are a little bit akin to, uh, to the, to the search model in its early days.

[00:31:55] Kirti Dewan: Um, uh, Vida, you would have a, you would have a very interesting perspective, I would imagine, on this, on this question. Would love to hear your thoughts.

[00:32:06] Vida Williams: I was going to say, I, I, because I appreciated and, and loved Radhika's. And I think from Radhika's perspective, I think mine is almost at the other end of the spectrum.

[00:32:15] Vida Williams: All of those things have to be true that she said, and we have to see and acknowledge that there's an entire generation doing something different with it. Um, because that is how we're going to move from it just being search capable to actually being able to see full scale innovation, automation, and things that we could not have predicted.

[00:32:38] Vida Williams: Um, and so it's interesting if you just do a quick search on Google, uh, YouTube or whatever your search mechanism is and you go, Hey, how do I do X, Y, Z in AI or ChatGPT or whatever you want to say. And you start seeing 16 and 14 year olds show up to give you a tutorial. Now we're starting to know that we're heading towards a different design aesthetic, right?

[00:33:03] Vida Williams: So, yes, all of the corporations, all of the innovators, all of us with gray hair have to set a very firm foundation from which we're gonna, we're gonna really use AI, however, it's going to be watching what is happening in the generations before, you know, that we are mentoring, that we are mentors to, and seeing how they are using it.

[00:33:28] Vida Williams: Um, and I'll just, I'll end with this. When I saw a 16-year-old-ish boy, in 15 minutes, write a cancer diagnostic tutorial in AI, I knew exactly that we have not even tipped the iceberg of what we're actually going to be able to do with this technology. So yes, and for me, it's that.

[00:33:54] Kirti Dewan: Right. Being really responsible about it, right? Um,

[00:33:57] Vida Williams: Oh, that's definitely.

[00:33:58] Kirti Dewan: Absolutely. Absolutely. Like it has to be embraced as a cultural value within organizations, but it's nearly like it needs to be embraced as a societal value.

[00:34:07] Vida Williams: Yes. And I have another ending segment for that, but I'll stop there.

[00:34:14] Kirti Dewan: Go ahead, Vida, if you, if you want to, we have time, so if you want to say a few more words.

[00:34:19] Vida Williams: Because I think right now we are kind of locked in, in the corporate aspect of, it's almost, it's almost reminiscent of the 1990s tech-for-tech boom, right before we realized that technology itself wasn't a business model, that just because you could do it didn't mean you were gonna be able to make money out of it.

[00:34:40] Vida Williams: Um, and so we had to go through that, I think, and we had to learn through that before we could get to where we are now, which is using technology ubiquitously, you know, in order to be able to undergird all of the industries. I, I think data's in that space as well. But the difference, I think, the slight difference, or maybe it is a clear difference, is that if we do not fully appreciate that people are willingly, sometimes unwillingly, giving you their interaction models from which you're making decisions using AI or other technologies, then we're misunderstanding that we're making decisions that also feed back in,

[00:35:26] Vida Williams: in that feedback loop, against them or impact them. And so I think when we're looking at the Algorithmic Accountability Act of 2019, when we're looking at the current executive order that Biden just signed, we need to, from a societal perspective, really advance our definitions of these terms, adopt a bill of rights akin to the Hippocratic Oath, and make sure that those of us who are in industry understand that we are working with human interaction data, with environmental impact data, with those things, so that as we're building, as the 16 year old is, is doing these models, that they are, they're doing so already conversant in accountability, responsibility, et cetera, um, to do no harm.

[00:36:16] Vida Williams: Um, so, so that's, that's where I think we... who are setting the foundation need to put our focus so that they, as they're coming up, have a firm foundation from which to build what is going to be magical for a long time, I think, before we become scientific again.

[00:36:37] Kirti Dewan: That's a great word, magical. I like that. And yes, businesses have to have to take the lead in as well, right, in defining what this responsibility is going to look like.

[00:36:48] Kirti Dewan: I think so. Yeah. Okay. So, um, uh, a question for all of you on your AI journey. Um, what is the one learning and/or piece of advice, um, whether it's technical, business, whatever you, whatever you think stands out the most, um, you would like to share not only with the audience, but also with the fellow panelists here.

[00:37:16] Kirti Dewan: So, uh, Radhika, how about we start with you? Okay.

[00:37:22] Radhika Venkatraman: Um, I think there is quite a lot of fear around AI, but my belief set is that AI assisted humans are going to be better off than non AI assisted humans. And therefore, like, don't wait to get started. Be that inspiring leader who can unlock discretionary effort of everyone around you.

[00:37:42] Kirti Dewan: Love it. Vida?

[00:37:47] Vida Williams: I would say to remember that engineering and technology and data is an art form, it is a creative medium, and that is why it's forming the basis of innovation today. And so remain insanely curious, remain exceptionally humble, but try it all and fail furiously fast so that we can learn that lesson and get to the actual, you know, right answer, whatever that might be.

[00:38:17] Kirti Dewan: Yep. I love it. Peter, your thoughts?

[00:38:20] Peter Bailey: Uh, possibly the thing that I keep continuing to learn, I think it's a little bit similar to Vida's, is that the more I learn about anything, whether it's like responsible AI or generative LLMs, or how products get defined, delivered for real versus, you know, public perceptions of them,

[00:38:36] Peter Bailey: the more I just continue, I realize I have even more to learn. Uh, moving to Canva from Microsoft a couple of years ago really felt like things better a lot. Uh, but then seeing what's going on in the industry in the last 18 to 24 months with AI, I just feel like the entire tech industry and the business community and the general public are all just on this,

[00:38:55] Peter Bailey: it's a very accelerated, um, learning, um, path at the moment. Um, and it's so, it's like the, the, the finding out of that is like, it's okay to feel like you don't know everything, um, or even much of anything. Um, there's a, there's a great, uh, quote from Satya Nadella, which I'm going to paraphrase, which is actually, it's better to be able to learn as much as you can rather than be a know-it-all.

[00:39:19] Peter Bailey: Um, and just kind of closing on that one, one of the things that struck me is like, you know, for those of us who work with this technology, we often know a lot about it, and we make assumptions that other people have the same, um, perceptions that we do, and the same, a lot of, a lot of learning. And this was really brought home to me, um, hearing a comment from one of our, uh, our team members, uh, reflecting on some customer feedback where they were complaining about some generative AI imagery that, um, was showing up for them, um, and saying, why would you have this in your content library?

[00:39:55] Peter Bailey: And they clearly had made no distinguishing between what was in our library and what was being created on demand for them. So we knew that there were differences here, but they basically had no perspective that these were different things. Um, and, you know, that's where I think this, this sense of like, the AI changes that have been, um, coming over the last, um, couple of years, are they ubiquitous?

[00:40:22] Peter Bailey: No. But a big section of society already has no perceived distinction between what's an AI output and what's just other output, and I think that's a real, you know, risk and challenge for all of us as we go about, um, building products and delivering services in, in society is that many people won't make the, um, the discrimination between the two.

[00:40:45] Peter Bailey: So yeah, uh, that's, that's a challenge.

[00:40:51] Kirti Dewan: Yeah, that really is a great point, right? It's social, um, it's social media times search times huge multiplier effect. Um, so Peter, on that, on, on, uh, on that note, there is an audience question on safety, on how safety is nuanced and how do you describe it, or can you shed light on when

[00:41:22] Kirti Dewan: LLMs are preferable and when predictive AI models are preferable for addressing trust and safety challenges?

[00:41:31] Peter Bailey: Uh, yeah, I think it's a, it's a, it's a really great question. I'm actually going to kind of, um, quote Krishna Barrett, who talked at a SIGIR conference, this was several years ago, so predating most of the LLM emergence.

[00:41:49] Peter Bailey: He was asked about Google and, and how they really sort of thought about what they had to do in the context of delivering search results for, you know, billions of people on the planet all the time. And he said it came down to this sort of trade off between popularity and authority. And I think LLMs are in some senses an embodiment of what's popular from the data sets that they've absorbed and sort of generative ability to create new content that effectively is popular within the input context,

[00:42:18] Peter Bailey: embedding space. Um, where you need authoritative content as the output, then I think, you know, there's a stronger requirement to think really deeply about where and how you might integrate LLM technology, because you don't really have a guarantee that it's authoritative if you're just relying on the LLM and, um, the kind of responsibility comes back to you, the product or service, uh, delivery owner,

[00:42:48] Peter Bailey: to, to question really carefully, how critical is my answer or my content needing to be authoritative? Um, you know, if, I'm sure Vida has this all the time, if you're delivering health information, you're delivering alcohol advice, et cetera, you need to be authoritative. You can't just make it up, or you really shouldn't be making it up.

[00:43:08] Peter Bailey: So I think that's, that's the, that's the, um, my, my take on this in terms of a safety trade off.

[00:43:17] Kirti Dewan: Yeah. I love, love the, love the dimension, um, that you used, uh, Peter, that's really great. Um, we could talk about this so much more. We could. This would be another hour of discussion, I feel, in terms of, uh, the things that we already, that all of you shared over here, and those were quick answers.

[00:43:36] Kirti Dewan: I know everyone had to keep it under three minutes, but I feel if we were to do this all over again, we could even have an hour or two of discussion. So it was really awesome, lovely to hear your perspectives. Thank you so much for joining. Uh, we are at time, um, so thank you, Vida, Peter, and Radhika. And with that, we conclude the AI Forward Summit.

[00:43:58] Kirti Dewan: It was a pleasure hosting each and every one of you.