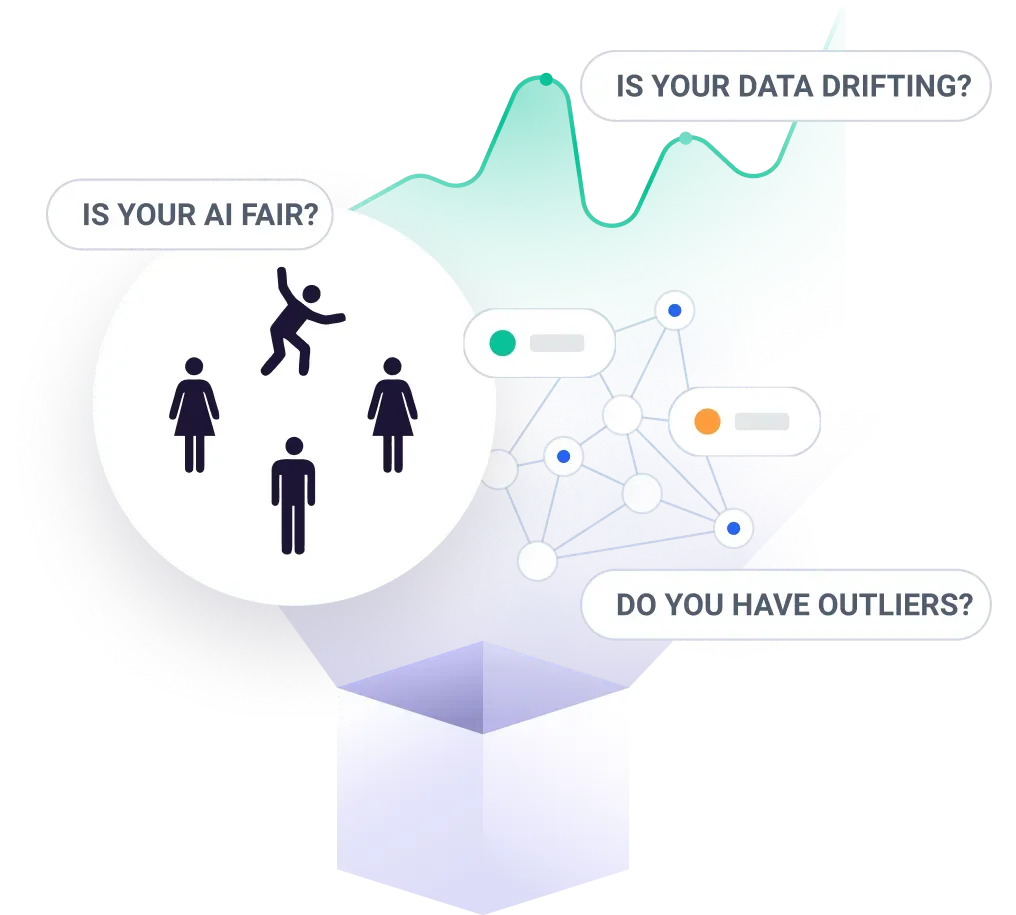

Today, at the VentureBeat Transform event, we launched our ML Monitoring feature set, including data drift detection, outlier detection, and data integrity monitoring. These capabilities are combined with Fiddler’s industry-leading Explainable AI Platform to efficiently and effectively explain, analyze, and resolve issues in production ML monitoring.

Challenges in MLOps Monitoring

AI adoption is accelerating, with one in ten enterprises currently using ten or more AI applications and 75% of businesses expected to shift from piloting to operationalizing AI by 2024. COVID-19 has only amplified this trend by pushing companies to adopt automation to cut costs. But the complexity of deploying ML has hindered the success of AI systems. Even beyond the challenge of amassing the right data to train models, model deployment and management present challenges similar to those that plagued software before DevOps monitoring arrived.

Challenge 1: Unreliable inputs (feature drift, errors, and outliers)

Models are trained on historical data with the hope of generalizing to future examples. Unfortunately, trends, and therefore the data models receive, often change, which in turn affects model performance. When such data drift occurs, data scientists must decide whether to act, often by retraining the model, or to do nothing. Assessing the impact of the drift can inform this decision, but today’s tools often make it hard to attribute a shift in the model’s predictions to drift in one or more specific features.
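To make “drift” concrete, here is a minimal sketch of one common way to score it for a single numeric feature: the Population Stability Index (PSI), which compares how production values fall into bins defined on the training data. This is a generic technique, not necessarily what Fiddler computes; the bin count, the `eps` floor, and the function names are our own assumptions. A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds should be tuned per model.

```python
import math
from bisect import bisect_right
from typing import Sequence


def bin_edges(baseline: Sequence[float], n_bins: int = 10) -> list[float]:
    """Interior edges of equal-width bins spanning the training (baseline) range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant feature
    return [lo + i * width for i in range(1, n_bins)]


def bin_proportions(values: Sequence[float], edges: list[float], eps: float = 1e-6) -> list[float]:
    """Share of values per bin; values outside the baseline range land in the end bins."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # Floor each share at eps so an empty bin never produces log(0).
    return [max(c / len(values), eps) for c in counts]


def psi(baseline: Sequence[float], production: Sequence[float], n_bins: int = 10) -> float:
    """Population Stability Index of production data relative to the baseline."""
    edges = bin_edges(baseline, n_bins)
    p = bin_proportions(baseline, edges)
    q = bin_proportions(production, edges)
    # Each term is >= 0, so PSI is 0 only when the binned distributions match.
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


training_values = [i / 100 for i in range(100)]
live_values = [0.5 + i / 200 for i in range(100)]  # shifted toward the upper range
print(psi(training_values, training_values))  # 0.0
print(psi(training_values, live_values) > 0.25)  # True
```

Note that PSI only says *that* a feature moved, not whether the move matters to the model’s output, which is exactly the attribution gap described above.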

Moreover, complex and brittle feature pipelines can break at any moment. The effect of a break can range from virtually nothing to a complete loss of functionality that causes the model to error out. Common types of feature pipeline errors include null or missing values, type mismatches, and range anomalies. Rapidly identifying and addressing these errors is paramount to keeping deployed AI systems, and the downstream applications and services they power, reliable.
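The three error types above map naturally onto per-feature checks against an expected schema. The sketch below is a hypothetical illustration, not Fiddler’s implementation: the `SCHEMA` contents, feature names, and message format are invented for the example.

```python
import math
from typing import Any

# Hypothetical schema for illustration: feature -> (expected type, min, max).
SCHEMA: dict[str, tuple[type, float, float]] = {
    "age": (int, 18, 100),
    "income": (float, 0.0, 1e7),
}


def integrity_errors(record: dict[str, Any], schema=SCHEMA) -> list[str]:
    """Return one message per missing, mistyped, or out-of-range feature."""
    errors = []
    for name, (expected, lo, hi) in schema.items():
        value = record.get(name)
        if value is None or (isinstance(value, float) and math.isnan(value)):
            errors.append(f"{name}: missing")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(value, bool) or not isinstance(value, expected):
            errors.append(f"{name}: type mismatch ({type(value).__name__})")
        elif not lo <= value <= hi:
            errors.append(f"{name}: out of range ({value})")
    return errors


print(integrity_errors({"age": "42", "income": float("nan")}))
# ['age: type mismatch (str)', 'income: missing']
```

The type check here is deliberately strict, so an `int` arriving where a `float` is expected is also flagged; a real pipeline might choose to coerce such values instead.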

Finally, deployed AI models may encounter seemingly valid data points containing values that fall outside the range of values seen in the training set. Detecting these outliers matters both for model performance and for the people affected by the model’s decisions. Denying a loan to a fully viable applicant because the model treated that applicant as an outlier is bad for both the organization and the consumer. Today, identifying such outliers often requires a separate anomaly detection system on model inputs.
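The simplest version of the check described above records each feature’s range in the training set and flags production rows that fall outside it. This is a minimal sketch under that assumption, with made-up feature names and values; it is not how Fiddler’s outlier detection works internally.

```python
Row = dict[str, float]


def fit_ranges(training_rows: list[Row]) -> dict[str, tuple[float, float]]:
    """Record the min and max of every feature seen during training."""
    return {
        name: (min(r[name] for r in training_rows), max(r[name] for r in training_rows))
        for name in training_rows[0]
    }


def outlying_features(row: Row, ranges: dict[str, tuple[float, float]]) -> list[str]:
    """Features whose value falls outside the range observed in training."""
    return [name for name, (lo, hi) in ranges.items() if not lo <= row[name] <= hi]


training = [{"age": 25, "income": 40_000}, {"age": 61, "income": 120_000}]
ranges = fit_ranges(training)
print(outlying_features({"age": 34, "income": 250_000}, ranges))  # ['income']
```

A per-feature range check misses points whose individual values are each in range but whose *combination* is unusual; catching those calls for a multivariate anomaly detector.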

Challenge 2: Uncertain feedback

After training a model, practitioners assess its performance by comparing its predictions against the ground-truth target. Production models, however, might not learn the real-world results of their decisions until long after those decisions were made (for instance, a loan application system might not be notified of a default until months after the loan was approved). In this case, metrics like AUC, precision, and recall cannot be calculated to assess model performance in real time. In the absence of live ground truth, ML practitioners must turn to proxy metrics, such as prediction scores or intermediate model decisions, to gauge model performance.
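The split between what you can measure now and what you can only measure later is easy to see in code. In this hypothetical sketch, `proxy_metrics` uses only the model’s scores (so it can be tracked immediately), while `precision_recall` needs labels that may arrive months later. The 0.5 threshold and the specific proxies chosen are illustrative assumptions.

```python
from statistics import mean


def proxy_metrics(scores: list[float], threshold: float = 0.5) -> dict[str, float]:
    """Label-free signals available as soon as predictions are made."""
    return {
        "mean_score": mean(scores),
        "positive_rate": sum(s >= threshold for s in scores) / len(scores),
    }


def precision_recall(scores: list[float], labels: list[int], threshold: float = 0.5) -> tuple[float, float]:
    """True performance metrics, computable only once ground-truth labels arrive."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


todays_scores = [0.9, 0.2, 0.7, 0.4]
print(proxy_metrics(todays_scores))  # trackable on day one
# Months later, once outcomes (e.g. defaults) are known:
print(precision_recall(todays_scores, [1, 0, 0, 1]))  # (0.5, 0.5)
```

A sudden jump in `positive_rate` does not prove the model got worse, but it is a cheap early signal worth investigating while the true metrics are still unavailable.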

Challenge 3: Tedious debugging

Beyond merely tracking the metrics above and identifying when anomalies or issues arise, MLOps teams must then debug those issues as swiftly as possible. Given the complexity and black-box nature of many AI systems, tracing a symptom to its underlying cause (i.e., root cause analysis) is often incredibly difficult. If a specific feature is exhibiting issues, it may be straightforward to check the code and upstream systems that generate that feature for changes or errors. But if the model’s overall performance begins to degrade, where do you start your investigation?

Explainable AI + ML Monitoring = Comprehensive & Actionable MLOps

Today, at VB Transform, we launched our ML Monitoring suite to enable businesses of all sizes to monitor, explain, and analyze their AI in production and to build more reliable, performant, and trustworthy AI models. Here’s an overview of the available capabilities:

Drift Detection

With Fiddler’s drift detection capabilities, businesses are continuously alerted to changes in model feature or prediction distributions relative to their training baselines. This helps them decide when to retrain models based on the impact of those changes. Additionally, Fiddler uses explainable AI to attribute these changes to the underlying features driving them, so practitioners can understand the ‘why’ and ‘how’ behind their model’s behavioral changes and resolve problems faster.
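Fiddler performs this attribution with its explainability tooling, and the sketch below is *not* that method. It is a simpler, hypothetical stand-in that conveys the idea: for each feature, swap its production values back to baseline values while leaving the others untouched, and measure how far the mean prediction moves back. The toy model, feature names, and cyclic pairing of baseline rows are all assumptions made for illustration.

```python
from statistics import mean
from typing import Callable

Row = dict[str, float]


def prediction_shift_by_feature(
    model: Callable[[Row], float], baseline: list[Row], production: list[Row]
) -> dict[str, float]:
    """Estimate how much of the mean-prediction shift each feature accounts for."""
    prod_mean = mean(model(r) for r in production)
    shifts = {}
    for name in production[0]:
        # Replace only this feature with baseline values (cycling through baseline rows).
        patched = [{**r, name: baseline[i % len(baseline)][name]} for i, r in enumerate(production)]
        shifts[name] = prod_mean - mean(model(r) for r in patched)
    # Order features from largest to smallest absolute contribution.
    return dict(sorted(shifts.items(), key=lambda kv: abs(kv[1]), reverse=True))


def toy_model(r: Row) -> float:
    """Hypothetical linear risk score, used only to exercise the function."""
    return 2.0 * r["utilization"] + 0.1 * r["tenure"]


baseline = [{"utilization": 0.2, "tenure": 5.0}, {"utilization": 0.4, "tenure": 7.0}]
production = [{"utilization": 0.7, "tenure": 5.0}, {"utilization": 0.9, "tenure": 8.0}]
print(prediction_shift_by_feature(toy_model, baseline, production))
```

Here `utilization` accounts for roughly 1.0 of the shift and `tenure` for about 0.05, so the investigation would start with `utilization`. One-feature-at-a-time swaps ignore feature interactions, so the per-feature numbers need not sum to the total shift; principled attribution methods exist to handle that.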

Data Integrity

Data inconsistencies can often go unnoticed in deployed AI systems. With Fiddler, teams can easily detect feature errors like missing values, type mismatches, and range anomalies, reducing overall issue resolution time.

Outlier Detection

Fiddler detects outliers in both model predictions and features, giving users a bird’s-eye view of all anomalies so they can catch them accurately and immediately. Users can then pair this with Fiddler’s AI explanations to quickly see how the model is treating these outlying points.

Real-Time Alerting & Explainable AI-Powered Debugging

Alerts are critical to ensuring teams catch issues as they happen. Fiddler lets you configure alerts on model performance, prediction and feature drift, and service health, so you are notified the moment something changes. Combined with Explainable AI analytics, these alerts help users quickly identify the root cause of an issue and troubleshoot it appropriately.
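Under the hood, an alert is essentially a rule that compares a monitored metric with thresholds. The sketch below illustrates that idea generically; it is not Fiddler’s alert configuration or API. The metric names and threshold values are hypothetical, and the rule assumes “higher is worse,” so a metric such as AUC would need the comparison flipped.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AlertRule:
    """Hypothetical rule: fire when a metric reaches a threshold (higher = worse)."""
    metric: str
    warning: float
    critical: float

    def evaluate(self, value: float) -> Optional[str]:
        if value >= self.critical:
            return "critical"
        if value >= self.warning:
            return "warning"
        return None


def check_alerts(rules: list[AlertRule], metrics: dict[str, float]) -> list[tuple[str, str, float]]:
    """Evaluate every rule against the latest metric snapshot."""
    fired = []
    for rule in rules:
        if rule.metric not in metrics:
            continue  # metric not reported in this window
        level = rule.evaluate(metrics[rule.metric])
        if level is not None:
            fired.append((rule.metric, level, metrics[rule.metric]))
    return fired


rules = [
    AlertRule("psi_income", warning=0.1, critical=0.25),      # feature drift
    AlertRule("null_rate_age", warning=0.01, critical=0.05),  # data integrity
    AlertRule("p99_latency_ms", warning=200, critical=500),   # service health
]
snapshot = {"psi_income": 0.31, "null_rate_age": 0.02, "p99_latency_ms": 120}
print(check_alerts(rules, snapshot))
# [('psi_income', 'critical', 0.31), ('null_rate_age', 'warning', 0.02)]
```

Two severity levels let teams route warnings to a dashboard and reserve paging for critical breaches.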

If you'd like to learn more about the release, contact us to talk to a Fiddler expert!